Multiverse Computing, a leader in AI model compression, has launched the LittleLamb open-source model family on Hugging Face. The release comprises three ultra-compact AI models (LittleLamb 0.3B, LittleLamb 0.3B Tool-Calling, and LittleLamb 0.3B Mobile) designed for edge, mobile, and offline deployment. The models were developed with Multiverse’s proprietary CompactifAI technology and are available for free, as the company aims to make AI more useful in real-world applications while keeping its footprint small.

Each model in the LittleLamb family has been compressed to approximately half the size of the original Qwen3-0.6B architecture, allowing for efficient inference with reduced latency and resource usage. All models support both English and Spanish and give developers two inference modes: a “thinking mode” for complex reasoning tasks like math and science, and a “non-thinking mode” that prioritizes speed for general dialogue.
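To put "approximately half the size" in concrete terms, the following is a rough back-of-the-envelope sketch, not a figure published by Multiverse. It assumes the parameter counts implied by the model names (0.6 billion and 0.3 billion) and estimates only the memory taken by the weights at a few common numeric precisions. The function name and the precision table are illustrative, and real downloads will differ because of embeddings, metadata, and file format overhead.

```python
# Rough weight-memory estimate: parameters x bytes per parameter.
# All numbers here are assumptions for illustration, not measured values.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_footprint_gb(num_params: float, dtype: str) -> float:
    """Return the approximate size of the weights in gigabytes (10^9 bytes)."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


QWEN3_PARAMS = 0.6e9       # nominal count, read off the name "Qwen3-0.6B"
LITTLELAMB_PARAMS = 0.3e9  # nominal count, read off the name "LittleLamb 0.3B"

for dtype in BYTES_PER_PARAM:
    original = weight_footprint_gb(QWEN3_PARAMS, dtype)
    compressed = weight_footprint_gb(LITTLELAMB_PARAMS, dtype)
    print(f"{dtype}: {original:.2f} GB -> {compressed:.2f} GB")
```

Under these assumptions, halving the parameter count halves the weight memory at any given precision, which is the kind of saving that matters on phones and other memory-constrained devices.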

According to Enrique Lizaso Olmos, CEO of Multiverse Computing, the launch of LittleLamb underscores the company’s commitment to making efficient AI accessible across diverse deployment environments. “With CompactifAI, we’ve demonstrated that compression doesn’t require sacrificing intelligence or capability,” he stated. The models are engineered to be not just lightweight but effective in various environments, challenging the notion that advanced AI must rely heavily on cloud infrastructure.

The three models offer distinct features tailored for specific use cases. LittleLamb 0.3B serves as a versatile bilingual model suitable for conversational AI, virtual assistants, and basic Q&A applications. In contrast, LittleLamb 0.3B Tool-Calling has been fine-tuned for tasks requiring API interactions and structured outputs, making it ideal for developers looking to integrate AI into automation pipelines. LittleLamb 0.3B Mobile, on the other hand, is optimized for resource-constrained environments and targets on-device assistants and offline applications.

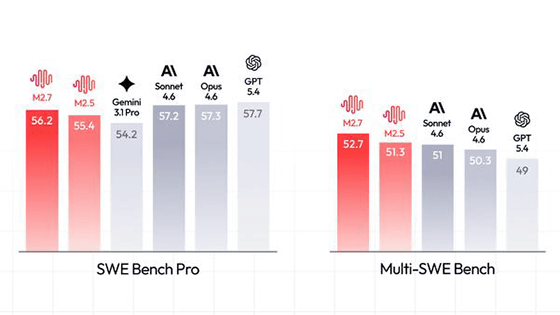

Reported benchmark results show that both LittleLamb 0.3B and LittleLamb 0.3B Tool-Calling surpass the original Qwen3-0.6B model and score higher than many models in the Gemma 270M class on HLE testing. These results reflect Multiverse’s ongoing efforts to develop compressed models that remain competitive with larger architectures. The new models also improve system throughput, output speed, and time to first token (TTFT).
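For readers unfamiliar with these latency metrics, the sketch below shows one common way to measure TTFT and output speed from any streaming token source. It is an illustration under stated assumptions, not Multiverse's benchmark harness: `measure_stream` and `fake_stream` are hypothetical names, and the fake generator merely stands in for a real model's streaming output.

```python
import time
from typing import Callable, Iterable, Iterator, Optional


def measure_stream(
    tokens: Iterable[str],
    clock: Callable[[], float] = time.perf_counter,
) -> dict:
    """Consume a token stream and report TTFT and average output speed."""
    start = clock()
    first_token_at: Optional[float] = None
    count = 0
    for _ in tokens:
        now = clock()
        if first_token_at is None:
            first_token_at = now  # timestamp of the first token
        count += 1
    elapsed = clock() - start
    return {
        "tokens": count,
        # Time to first token; None if the stream produced nothing.
        "ttft_s": None if first_token_at is None else first_token_at - start,
        # Average tokens per second over the whole request.
        "tokens_per_s": count / elapsed if elapsed > 0 else 0.0,
    }


def fake_stream(n: int, delay_s: float = 0.01) -> Iterator[str]:
    """Hypothetical stand-in for a model that emits one token per delay."""
    for i in range(n):
        time.sleep(delay_s)
        yield f"tok{i}"


stats = measure_stream(fake_stream(5))
print(stats)
```

The clock is passed in as a parameter so the same function can be checked with deterministic timestamps. In practice the iterable would be whatever streaming interface the chosen inference runtime exposes.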

Multiverse’s CompactifAI technology, which applies quantum-inspired tensor network mathematics, can reduce model size by up to 95% with a precision loss of only 2–3%. This contrasts sharply with the industry standard, where compression at similar rates often results in a 20–30% decrease in accuracy. Such advancements enable the deployment of AI in lighter, more accessible forms in mobile and edge environments.

With the introduction of the LittleLamb model family, Multiverse is making significant strides in edge-native AI, expanding its portfolio of open-source models aimed at increasing the practicality of advanced AI for developers. The availability of these models addresses a growing demand for AI that is not only theoretically accessible but also practical for real-world applications, especially in scenarios where privacy, latency, or computing power are critical concerns.

Developers interested in exploring Multiverse Computing’s offerings can access all released models on Hugging Face at https://huggingface.co/MultiverseComputingCAI. LittleLamb 0.3B, LittleLamb 0.3B Tool-Calling, and LittleLamb 0.3B Mobile are each linked directly from that page. For additional technical details, documentation, and integration guides, users are encouraged to visit the company’s Hugging Face page and official website.
