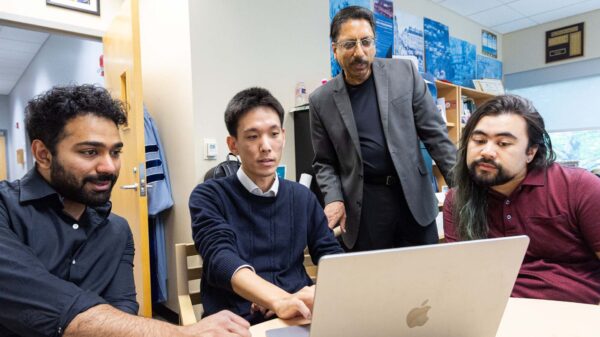

Heriot-Watt University has published a study warning that businesses integrating generative AI into their machine learning systems may face a higher risk of cyber-attacks, data breaches, and algorithmic bias. The research, led by Professor Michael Lones of the university's School of Mathematical and Computer Sciences, highlights the potential dangers of this rapidly evolving technology.

The paper examines how generative AI is used to design, build, and manage machine learning systems across sectors including finance, insurance, and healthcare. It argues that incorporating large language models (LLMs) can introduce hidden risks that organizations may struggle to detect, secure against, or explain.

Machine learning has traditionally been used to find patterns in data, supporting decisions that range from spam filtering and product recommendations to fraud detection and insurance claims processing. According to the study, however, the recent rush to integrate generative AI into these systems has outpaced understanding of the trade-offs involved.

Professor Lones examined four main uses of generative AI in machine learning workflows: as a component within machine learning pipelines, to design and code those pipelines, to generate synthetic training data, and to analyze outputs. The study identifies risks linked to each use case and emphasizes that these risks are amplified when large language models are used repeatedly within a single system.

The research also raises alarms about so-called agentic models, which can autonomously use external tools to execute tasks. In these scenarios, the interactions among different AI components may become increasingly unpredictable, making it harder for developers and businesses to maintain oversight.

A significant concern highlighted in the paper is the tendency of large language models to make mistakes, producing false information and poor decisions. Because these systems are opaque, it is difficult to assess how accurate they are or to understand why they produce a particular outcome.

This opacity poses particular challenges in regulated sectors, where businesses are often required to demonstrate the reliability of automated systems and explain decision-making processes. The study points to fields like medicine and finance, where transparency and accountability are paramount.

Bias is another critical issue raised. Undetected flaws in generative AI-enhanced systems could lead to inequitable outcomes for marginalized groups, particularly in contexts where machine learning influences decisions affecting health, financial stability, or day-to-day livelihoods.

Cost pressure forms the backdrop to this trend, as many organizations turn to generative AI to cut expenses and automate tasks in their data and software processes. However, the study cautions that the pursuit of savings may introduce new technical and legal vulnerabilities.

In comments accompanying the research, Professor Lones stressed the need for awareness of these risks. "Machine learning developers need to be aware of the risks of using Gen AI in machine learning and find a sensible balance between improvements in capability and the risks that might come with that," he said. He emphasized that just because a task can be automated with generative AI does not mean it should be.

Lones also cautioned against overcomplicating workflows with multiple layers of generative AI. "If you have Gen AI working in a number of different ways within your machine learning workflows or system, then they can interact in unpredictable and hard-to-understand ways," he warned. His advice is to keep complexity to a minimum, especially in high-stakes sectors that affect people's lives.

The findings add to an ongoing debate about the rapid adoption of generative AI tools, which often outpaces the development of governance, testing, and compliance frameworks. Companies in heavily regulated industries face growing scrutiny over how their automated systems make decisions, how they handle data, and how they affect customers.

The challenge is not only whether generative AI technology works, but also whether errors, bias, or security vulnerabilities can be detected before they cause harm. The research suggests that as AI systems become more complex and less transparent, addressing these issues becomes even more important.

Lones stresses that the implications extend beyond developers and businesses to the general public. "In areas like medicine or finance, there are laws about being able to show that the machine learning system is reliable, and that you can explain how it reaches decisions," he noted. Once large language models are introduced, demonstrating that reliability becomes much harder. He urged the public to stay informed about the limitations of generative AI systems, noting that while companies may use these technologies to reduce operating costs and improve user experiences, doing so could inadvertently lead to harmful consequences, including bias and unfairness.
