Nvidia reshaped the AI processor landscape at its GTC 2026 conference by integrating Groq’s dataflow technology into its Vera Rubin platform as a coprocessor. The move addresses an immediate bandwidth problem while exposing a longer-term concern: the looming limits of the von Neumann architecture. Two alternative processor families, photonic and neuromorphic, are advancing faster than industry experts anticipated. Neither currently challenges Nvidia’s dominance, but both are nearing the point where data center power constraints may force their adoption.

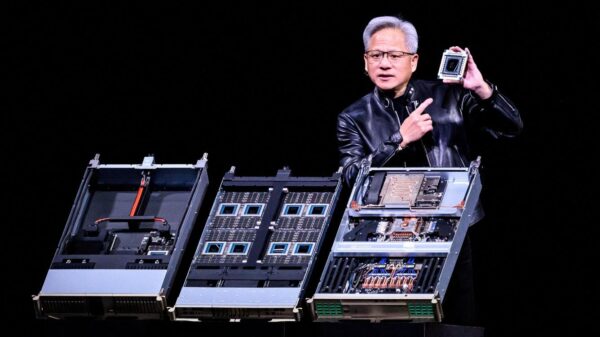

Acquiring Groq’s intellectual property and rapidly incorporating it into Vera Rubin has given Nvidia a temporary fix for its bandwidth problem. CEO Jensen Huang noted, “If you wanted to have services that deliver not 400 tokens per second, but a thousand tokens per second, NVLink72 runs out of steam, and you simply can’t get there. We just don’t have enough bandwidth. And so, this is where Groq comes in.” Groq’s latest LPUs prioritize speed over memory capacity, offering 150 TB/s of bandwidth with just 500 MB of SRAM per chip. A fully equipped 256-LPU rack reaches roughly 40 PB/s of aggregate bandwidth, enabling high-throughput inference on long contexts. The integration addresses the current limit, but the bandwidth barrier is a moving target that Nvidia is likely to hit again in the near future.
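The rack-level figure follows from the per-chip numbers quoted above. The short sketch below multiplies them out; it assumes decimal units (1 PB = 1,000 TB, 1 GB = 1,000 MB) and that bandwidth and SRAM simply add across chips, which the article implies but does not state. The function name `rack_totals` is illustrative only.

```python
# Per-chip figures as quoted in the article. Decimal unit prefixes and
# simple linear aggregation across chips are assumptions of this sketch.
LPUS_PER_RACK = 256
BANDWIDTH_PER_LPU_TBPS = 150  # TB/s per LPU
SRAM_PER_LPU_MB = 500         # MB of on-chip SRAM per LPU


def rack_totals(lpus, tbps_per_lpu, sram_mb_per_lpu):
    """Return (aggregate bandwidth in PB/s, aggregate SRAM in GB)."""
    bandwidth_pbps = lpus * tbps_per_lpu / 1000
    sram_gb = lpus * sram_mb_per_lpu / 1000
    return bandwidth_pbps, sram_gb


bandwidth_pbps, sram_gb = rack_totals(
    LPUS_PER_RACK, BANDWIDTH_PER_LPU_TBPS, SRAM_PER_LPU_MB
)
print(f"Aggregate bandwidth: {bandwidth_pbps:.1f} PB/s")
print(f"Aggregate SRAM: {sram_gb:.0f} GB")
```

Under these assumptions the product is 38.4 PB/s, which the article rounds to 40 PB/s, backed by about 128 GB of SRAM across the whole rack. That ratio illustrates the trade-off described above: very high bandwidth paired with comparatively little memory.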

Improvements in dataflow and compute-in-memory designs are expected to keep AI workloads scaling for a while, yet the von Neumann architecture has a ceiling even with Groq’s enhancements. Meanwhile, two alternative processor families are evolving outside mainstream attention: photonic and neuromorphic processors. They differ significantly in their physics and target markets, but both are developing faster than projected and pose a potential threat to traditional architectures.

Photonic AI processors have a physics advantage that is well established. In the optical domain, matrix-vector multiplication incurs nearly zero switching energy because the computation happens as light propagates, generating negligible resistive heat. Q.ANT claims its processors achieve 30 times the energy efficiency of conventional chips, while Neurophos’ Tulkas T100 aims for 300 to 350 TOPS/W. Although these figures are pre-commercial, they rest on sound physical principles rather than marketing claims alone. For matrix-heavy tasks such as LLM prefill inference, a photonic coprocessor operating at 30 W could significantly reduce energy costs compared with a GPU consuming 700 to 1,000 W.
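To put the 30 W versus 700 to 1,000 W comparison in concrete terms, the sketch below computes the power ratio and the annual energy draw of each device. It assumes continuous operation at the quoted power for a full year and, hypothetically, equal throughput on the offloaded work; neither assumption comes from vendor data, and cooling and host overheads are ignored.

```python
# Power figures as quoted in the article. Continuous full-power operation
# and equal throughput are assumptions of this sketch, not measured values.
PHOTONIC_W = 30
GPU_W_RANGE = (700, 1000)
HOURS_PER_YEAR = 24 * 365


def power_ratio(gpu_watts, coprocessor_watts):
    """How many times more power the GPU draws than the coprocessor."""
    return gpu_watts / coprocessor_watts


def annual_energy_kwh(watts):
    """Energy used in one year of continuous operation, in kWh."""
    return watts * HOURS_PER_YEAR / 1000


ratios = [power_ratio(w, PHOTONIC_W) for w in GPU_W_RANGE]
print(f"GPU draws {ratios[0]:.1f}x to {ratios[1]:.1f}x the power")

for label, watts in [("photonic", PHOTONIC_W),
                     ("GPU (low)", GPU_W_RANGE[0]),
                     ("GPU (high)", GPU_W_RANGE[1])]:
    print(f"{label}: {annual_energy_kwh(watts):,.1f} kWh/year")
```

On these assumptions the GPU draws roughly 23 to 33 times the power, which is in line with the 30x efficiency figure Q.ANT claims. The real saving depends on how much of a workload can actually be moved to the optical domain.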

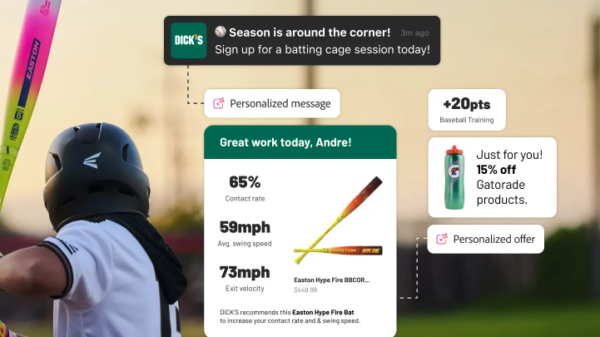

Despite the energy advantage, switching to photonic coprocessing requires substantial software and deployment changes. Nvidia’s Vera Rubin excels in programmability, precision, and memory bandwidth, and it supports an extensive suite of AI frameworks. Groq’s architecture competes more directly with photonic processors, since both prioritize inference efficiency over training flexibility. Groq’s LPUs are commercially available and can run Llama-class models at over 1,300 tokens per second without optical-to-electronic conversion overhead. The Tulkas T100, with its 768 GB of HBM, has an edge for larger models, although it is not expected to ship until 2028.

The more useful framing treats photonic processors as coprocessors alongside GPUs rather than as outright replacements. The pivotal moment will arrive when data center operators must choose between building new power infrastructure to accommodate more Nvidia racks and integrating photonic coprocessors that deliver equal inference throughput at a significantly lower energy cost. That decision point may be approaching faster than analysts previously expected.

Neuromorphic processors are harder to assess because they are not a single architecture. Designs diverge sharply across types, including spiking neural networks (SNNs) and in-memory computing. SNN chips, such as Intel’s Loihi 2 and BrainChip’s Akida, compute only when neurons fire, so they consume minimal energy but struggle with dense transformer inference. In-memory computing improves energy efficiency by performing matrix multiplications in or next to the memory that holds the weights: analog designs compute directly in the memory cells, while IBM’s NorthPole takes a digital approach that interleaves memory with compute on the chip. BrainChip targets low-power edge inference, a market outside Nvidia’s primary focus.

The core challenge for neuromorphic processors in the LLM sector is architectural: current designs are ill-suited to the dense, high-precision demands of transformer models. No neuromorphic chip has yet run GPT-class or Llama-class models efficiently, leaving Groq’s LPU as the only viable alternative architecture for high-throughput token generation.

The distinctions between photonic and neuromorphic technologies are clear. Photonic processors are adept at handling the same workloads as GPUs, primarily focused on dense matrix multiplications for transformer inference. Neuromorphic processors excel in fundamentally different applications involving sparse, event-driven data. While both categories may intersect in specific applications, such as server-side CNN inference, they cater to separate markets. As such, neither technology is poised to displace Nvidia in the immediate future.

Ultimately, Nvidia’s integration of Groq is a tactical measure that provides a temporary reprieve from bandwidth constraints. Because photonic processors target the same inference workloads as GPUs, their entry into data centers is plausible as power budgets approach their limits. Neuromorphic technology remains focused on fundamentally different workloads, which places it in a separate domain from Nvidia’s core business. Photonic processors may pose the more significant long-term challenge to Nvidia’s economics, but the software ecosystem gap remains a critical barrier. Huang’s acknowledgment at GTC that NVLink72 “runs out of steam” marks a pivotal moment in AI processor architecture. The traditional approach of scaling through transistors and memory bandwidth is nearing its limits, and both photonic and neuromorphic technologies are emerging as architectural candidates for what comes next. The race to build software ecosystems around this new silicon will determine the leaders in the next phase of AI development.
