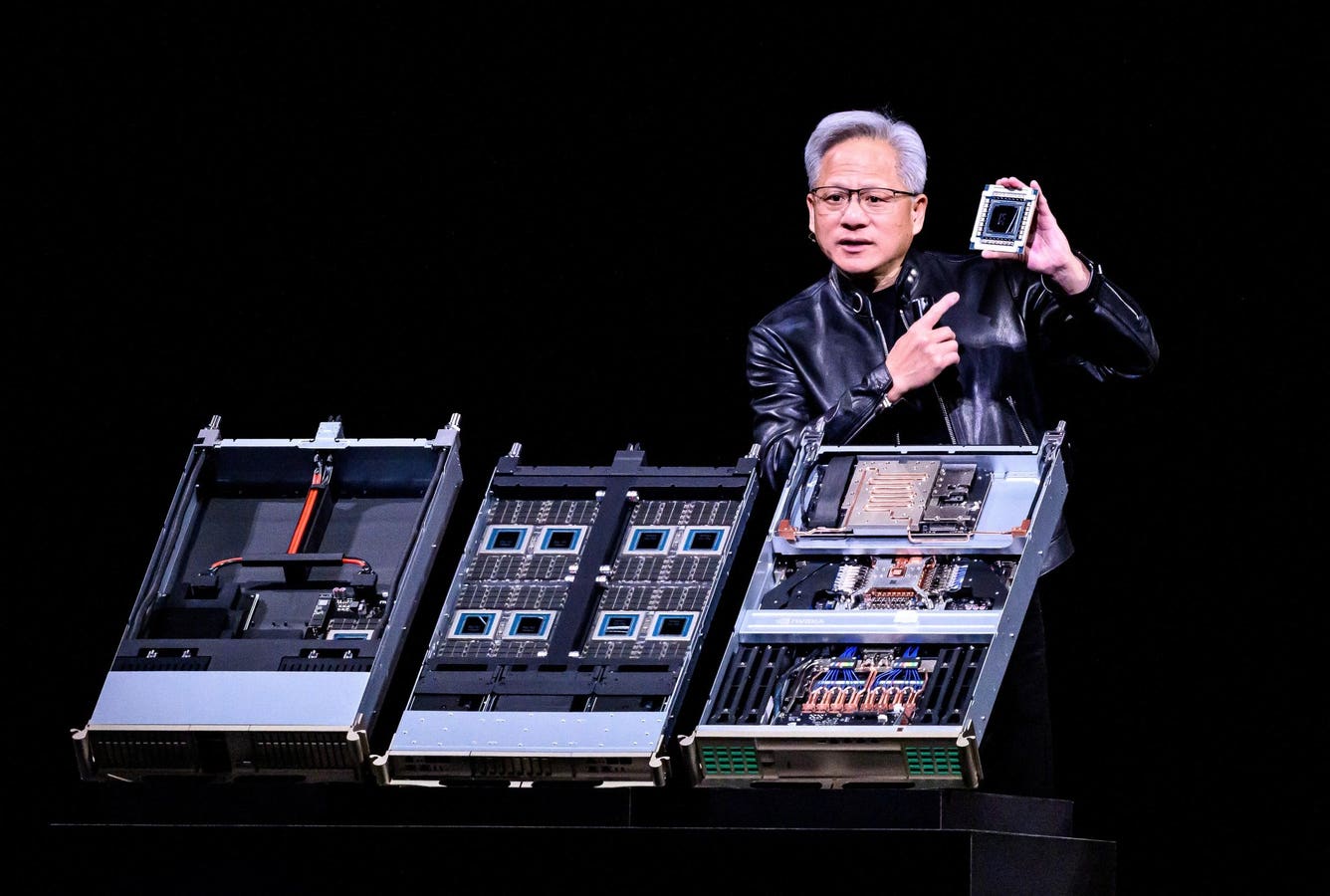

Nvidia CEO Jensen Huang unveiled the company’s next-generation AI data center platform, Vera Rubin, during his keynote address at Nvidia’s annual GTC developers conference in San Jose, California, on March 16, 2026. “I believe that computing demand has increased by one million times in the last two years,” Huang said. He projected $1 trillion in demand for Nvidia’s Blackwell and Vera Rubin systems through 2027, double the $500 billion the company had estimated a year earlier. In the weeks since the announcement, however, emerging details suggest that even this headline figure is a moving target and may soon be outdated.

The key development is not the $1 trillion forecast itself but the pace at which demand is growing. At GTC, Huang said compute demand has surged “off the charts.” If that pace holds, the trillion-dollar figure, large as it is, could soon be revised upward again.

Nvidia is now scaling with the broader expansion of artificial intelligence rather than with traditional semiconductor cycles. New technologies make future market size hard to predict because they drive widespread adoption and draw in users who were not previously part of the market. Programming with AI, for instance, is currently the domain of software engineers, but non-technical users such as business analysts are expected to join in increasing numbers. Breakthrough technologies like AI therefore do more than accelerate their own adoption: they also enlarge the overall market.

A notable shift in Nvidia’s focus is its pivot from training large models to inference at scale, meaning the real-time execution of AI systems. “The inference inflection has arrived,” Huang declared, explaining that AI can now perform tasks that require cognitive functions, such as reading and carrying out productive work. As these agentic AI systems gain momentum, AI workloads are becoming persistent operations that continuously generate outputs and make decisions, and that change is reshaping Nvidia’s entire product roadmap. The company has introduced new architectures aimed specifically at real-time AI processing, designed to work alongside GPUs to improve latency and token throughput.

This is a significant repositioning for Nvidia. The company aims not only to keep its lead in training but also to dominate inference, the segment poised to drive most of the long-term demand in the AI sector.

Vera Rubin’s Impact

The Vera Rubin platform is set to enter production at scale, with systems expected to roll out across cloud infrastructure in the second half of 2026. Bernstein analysts estimate that Vera Rubin may deliver around five times the inference performance and 3.5 times the training performance of existing systems. More than a faster chip, Vera Rubin is a complete system architecture designed to support what Nvidia calls AI factories: massive, always-on compute environments optimized for inference-heavy workloads.

Nvidia’s recent announcements show how far it has committed to this model. They include new rack-level systems, CPUs tailored for agentic AI, and integrated architectures that combine GPUs, networking, and storage into a single platform. The company is also addressing critical bottlenecks in AI infrastructure, focusing on data movement to ease constraints on context memory and token throughput and so improve efficiency for large-scale inference workloads.

Despite Nvidia’s dominant position, the competitive landscape is evolving. Alternative inference providers are gaining traction, with reports indicating that Google is exploring a partnership with Marvell to develop chips for AI inference, alongside its existing tensor processing units. Hyperscalers continue to invest in custom silicon, while AI companies are diversifying their compute strategies.

In this rapidly changing environment, one constraint persists: the supply of AI compute remains inadequate to meet soaring demand. While Nvidia is ramping up production, hyperscaler and enterprise customers are competing for limited access to compute resources. This imbalance is not a passing issue but a defining characteristic of the current AI landscape, in which compute has become a limiting factor for market growth. Huang’s trillion-dollar projection may eventually be revised upward, but the larger story is that the industry is scaling faster than even Nvidia anticipated. Companies that control computing resources will not merely participate in the next phase of AI; they will shape it.
