New research from scientists at Anthropic and ETH Zurich suggests that modern artificial intelligence systems may be capable of uncovering the real identities behind anonymous online accounts. The study, published as a preprint on arXiv, shows that large language models (LLMs) can analyze online behavior and link pseudonymous profiles to actual individuals, potentially at scale.

Titled “Large-scale online deanonymization with LLMs,” the research examines how AI systems can automate deanonymization: linking anonymous online accounts to their real-world identities. Historically, this task required extensive manual investigation, with analysts sifting through posts, writing styles, and other digital clues. The researchers’ findings suggest that advanced AI models can now perform many of these steps automatically, with far less human effort.

In the study, the AI analyzed public text from various online platforms and extracted identity-related signals such as personal interests, demographic hints, writing style, and incidental details mentioned in posts. It then searched for candidate profiles and assessed whether the inferred clues matched known individuals.

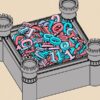

To evaluate their method, the researchers built several datasets in which the true identities were known. One experiment involved matching Hacker News users to their LinkedIn profiles, even after obvious identifiers such as names and usernames had been removed. Another focused on linking pseudonymous accounts across different Reddit communities. A third split a single user’s posting history into two separate profiles to test whether the AI could tell that both belonged to the same person.

The results showed that LLM-based systems significantly outperformed traditional deanonymization techniques, achieving up to 68% recall at approximately 90% precision. In other words, the system correctly identified up to 68% of the target accounts, and about 90% of the identifications it did make were correct. Conventional methods, by contrast, achieved close to zero success in similar tests.
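To make the two metrics concrete, here is a minimal Python sketch of how precision and recall are typically computed for a matching task in which the system may decline to guess. The counts below are hypothetical, chosen only to produce figures close to the reported ones; they are not taken from the study, and the function name is illustrative.

```python
def precision_recall(correct: int, attempted: int, total: int) -> tuple[float, float]:
    """Return (precision, recall) for an identification task.

    precision: share of the system's guesses that were right.
    recall:    share of all target accounts that were correctly identified.
    """
    precision = correct / attempted if attempted else 0.0
    recall = correct / total if total else 0.0
    return precision, recall


# Hypothetical counts: 1,000 target accounts, the system commits to 756 guesses,
# and 680 of those guesses are correct. It abstains on the remaining 244 accounts.
p, r = precision_recall(correct=680, attempted=756, total=1000)
print(f"precision={p:.2f}, recall={r:.2f}")
```

Keeping the two numbers separate matters: a system that abstains when unsure can keep precision high (few wrong identifications) even though recall shows it leaves many accounts unidentified.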

The researchers noted that these findings show how AI can replicate work that previously took human investigators hours. An AI system can automatically extract relevant characteristics from text, search for potential matches among thousands of profiles, and judge which candidate is most likely to be correct.

This capability raises significant concerns about online anonymity, a vital protection for many users, including journalists, whistleblowers, activists, and ordinary people who want to discuss sensitive topics without revealing who they are. The study suggests that a key part of this protection, often called “practical obscurity” (identification may be possible in principle but is too laborious to be worth the effort), may be eroding as AI systems become better at connecting digital clues across platforms. If automated tools can do this work quickly and cheaply, the barrier to identifying anonymous users could drop substantially.

The researchers estimate that identifying an online account with their experimental approach could cost between $1 and $4 per profile, which would make large-scale investigations feasible at relatively low cost. However, they caution that the study was conducted in controlled settings using public data. The findings have not yet been peer reviewed, and the researchers intentionally withheld certain technical details to reduce the risk of misuse.

Despite these precautions, the results have sparked debate among privacy advocates and technologists. The findings suggest that people may need to reconsider how much personal information they share online, even in spaces that seem anonymous. Looking ahead, the researchers call for further study of both the risks of AI-powered deanonymization and possible defenses against it. These could include stronger privacy tools, better platform safeguards, or AI systems designed to anonymize sensitive data before it is shared publicly.

As AI capabilities continue to grow, this study highlights an escalating challenge: balancing the power of AI-driven discovery against the need to protect personal privacy online.
