Multimodal Perception and Reasoning Bring Physical AI Closer to Reality

As artificial intelligence progresses, the focus is shifting from enhancing large language models (LLMs) to enabling AI to function in the real world. This shift is driving the development of Physical AI, a sector defined by its potential to transform AI from a digital advisor into a practical workforce. The core challenge now is building systems that operate reliably in physical environments, drawing on real-time computing and advanced interactive capabilities.

Physical AI systems are designed to engage deeply with their surroundings through various sensing technologies. By continuously learning from their interactions, these systems develop a nuanced understanding of the world, forming a feedback loop that allows them to observe, comprehend, and act upon commands. This transition from virtual to physical is fueled by breakthroughs in multimodal model technologies, which are becoming critical in the competitive landscape as we approach 2026.

The integration capabilities of multimodal models facilitate a seamless connection between visual recognition, language understanding, and action execution. For instance, a household robot tasked with “carrying the soup to the living room” must navigate numerous challenges such as preventing spills and avoiding obstacles. This complexity necessitates the coordination of multiple models, emphasizing the increasing demand for real-time computational power as deployment scenarios become more intricate.

High-performance computing is no longer merely a showcase of power; it is essential for intelligent agents to function safely in real-world contexts. In a physical environment, even millisecond-scale delays between sensing and acting can create safety hazards, which is why efficient processing matters. This requirement is driving the development of hardware that balances substantial AI performance with low power consumption and reliability.

In response to these demands, NVIDIA has launched the Jetson AGX Thor, a cutting-edge computing platform tailored for Physical AI applications, including robotics and autonomous systems. This robust AI processor merges sensing, inference, and control within a unified framework, allowing it to execute complex tasks that involve both high-level reasoning and low-level motion control.

The Jetson Thor architecture facilitates the simultaneous operation of large model inference and high-frequency control loops, meeting stringent functional safety and real-time operational requirements. NVIDIA’s emphasis on hardware-software co-design enhances its competitive edge in the evolving Physical AI landscape, lowering barriers for multimodal systems and expediting their path to widespread implementation.
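One way to picture large model inference running alongside a high-frequency control loop is a dual-rate schedule: a slow planner refreshes the target only occasionally, while a fast controller keeps tracking the most recent target on every tick. The sketch below is a simplified, single-threaded simulation of that idea. The rates (1000 Hz control, 10 Hz planning), the proportional gain, and the `DualRateLoop` class are illustrative assumptions, not Jetson Thor specifications or NVIDIA APIs.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class DualRateLoop:
    """Toy simulation of a slow planner feeding a fast controller."""
    control_hz: int = 1000   # assumed fast control rate
    planner_hz: int = 10     # assumed slow model-inference rate
    gain: float = 0.05       # assumed proportional gain per control tick

    def run(self, waypoints: List[float], seconds: float = 1.0) -> Tuple[float, int]:
        ticks_per_plan = self.control_hz // self.planner_hz
        total_ticks = int(self.control_hz * seconds)
        position = 0.0
        target = 0.0
        plan_updates = 0
        for tick in range(total_ticks):
            if tick % ticks_per_plan == 0:
                # Slow path: stand-in for large model inference that
                # picks the next waypoint (the last one is held).
                index = min(plan_updates, len(waypoints) - 1)
                target = waypoints[index]
                plan_updates += 1
            # Fast path: runs every tick and uses the latest target,
            # without waiting for the planner.
            position += self.gain * (target - position)
        return position, plan_updates


loop = DualRateLoop()
final_position, updates = loop.run([0.5, 1.0], seconds=1.0)
print(f"planner updates: {updates}, final position: {final_position:.3f}")
```

In a real system the two paths would typically run on separate threads, processes, or accelerators; the point of the sketch is only that the control step never blocks on the planner.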

As we look ahead, the integration of autonomy into Physical AI represents a significant frontier. Humanoid robots are projected to enter domestic applications by 2026, raising pressing concerns regarding safety, data privacy, and latency in data transmission. This context is driving a notable shift toward edge computing, where AI systems process data locally to meet stringent real-time requirements.

Current Edge AI applications have moved beyond basic visual recognition to encompass interactive scenarios that combine data from speech, vision, and other modalities. In various sectors, including public services and smart retail, industrial PCs and embedded platforms are increasingly becoming the backbone of multimodal frameworks. The focus has shifted from merely demonstrating computing power to delivering integrated, real-world solutions that encompass everything from sensing modules to operational frameworks.

This evolution is fostering the development of Agentic AI architectures within edge devices, with the aim of letting them handle perception, decision-making, and action independently. The combination of NVIDIA Jetson Thor and advanced multimodal models equips robots with enhanced sensory capabilities and precise control over their movements. What remains unsolved is truly autonomous decision-making.

The next stage in the evolution of Physical AI revolves around developing intelligent agents that can recognize objectives, break down tasks, and adapt strategies dynamically. In this ecosystem, sensing systems gather physical data, multimodal models interpret it, and agents execute decisions, resulting in smart edge devices that can act autonomously. This marks a pivotal transition from AI being a passive executor to becoming an active participant in its environment.
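The sense, interpret, act pipeline described here can be sketched as a small loop: a goal is broken into steps, each sensor reading is interpreted, and the agent adapts its plan before acting. The sketch below reuses the soup-carrying example from earlier in the article. Everything in it is hypothetical: the hand-written plan table stands in for a multimodal model, and `decompose`, `interpret`, and `run_agent` are illustrative names rather than part of any real framework.

```python
from typing import Dict, List


def decompose(goal: str) -> List[str]:
    """Break a goal into ordered steps (hypothetical lookup table
    standing in for a model-generated plan)."""
    plans = {
        "carry soup to living room": [
            "grasp bowl",
            "walk to living room",
            "place bowl",
        ],
    }
    return list(plans.get(goal, []))


def interpret(reading: Dict[str, bool]) -> str:
    """Turn a raw sensor reading into a symbolic state."""
    return "blocked" if reading.get("obstacle") else "clear"


def run_agent(goal: str, readings: List[Dict[str, bool]]) -> List[str]:
    """Execute each step, adapting when the path is blocked."""
    actions: List[str] = []
    sensor_stream = iter(readings)
    for step in decompose(goal):
        # Missing readings are treated as an empty (clear) reading.
        state = interpret(next(sensor_stream, {}))
        if state == "blocked" and step.startswith("walk"):
            # Adapt the strategy before carrying on with the step.
            actions.append("replan route")
        actions.append(step)
    return actions


log = run_agent(
    "carry soup to living room",
    [{}, {"obstacle": True}, {}],
)
print(log)
```

A production agent would replace the lookup table and the single-flag interpretation with model inference over vision, speech, and other sensor data; the loop structure is the part this sketch is meant to show.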

NVIDIA Jetson Thor lays the groundwork for real-time computation essential for this transformation, while intelligent agents will tackle higher-order cognitive functions, such as understanding intent and planning autonomously. The journey of Physical AI is just beginning; the real turning point will come when these systems can comprehend their goals and make informed choices about their actions.

(Photo credit: FREEPIK)

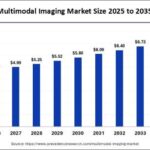
