OpenBox AI has officially launched its governance platform, unveiling the service alongside a $5 million seed funding round led by Tykhe Ventures. The platform aims to manage and secure enterprise AI systems in response to increasing regulatory demands in the United States and Europe.

OpenBox’s launch comes as AI governance shifts from a theoretical discussion to a regulatory necessity. Recent developments, including the White House’s National AI Legislative Framework and the implementation of the EU AI Act for high-risk systems, are compelling enterprises to adopt more stringent controls over their AI deployments. These frameworks prioritize safeguards against fraud, protection of intellectual property, and accountability in AI-driven decision-making.

OpenBox positions itself as essential infrastructure for governing AI agents: autonomous systems capable of accessing data, executing workflows, and making decisions without human oversight. With projections indicating that task-specific AI agents could be embedded in 40% of enterprise software by the end of 2026, organizations face increasing pressure to ensure transparency, compliance, and effective risk management.

The platform emphasizes governance enforcement at the point of execution, rather than relying solely on retrospective monitoring. Its key features include real-time audit trails, cryptographic attestation, and human oversight controls for critical decisions. Additionally, it employs dynamic risk scoring that adjusts based on agent behavior, along with cognitive behavior analysis to identify deviations in AI objectives before they evolve into operational risks.
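To make the idea of enforcement at the point of execution more concrete, here is a minimal Python sketch. It is not OpenBox's API or implementation, which the announcement does not describe; every name in it (`AuditLog`, `Gate`, `approval_threshold`, the 0.5 smoothing weights) is a hypothetical chosen for illustration. A simple SHA-256 hash chain stands in for a tamper-evident audit trail (it is not full cryptographic attestation, which would typically involve signatures), and an exponential moving average stands in for dynamic risk scoring.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Append-only log; each entry's hash covers the previous entry's hash."""
    entries: list = field(default_factory=list)

    def append(self, record: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        payload = json.dumps(record, sort_keys=True)
        entry_hash = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
        self.entries.append({"record": record, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; any edited record breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            payload = json.dumps(entry["record"], sort_keys=True)
            expected = hashlib.sha256((prev_hash + payload).encode()).hexdigest()
            if entry["prev"] != prev_hash or entry["hash"] != expected:
                return False
            prev_hash = entry["hash"]
        return True


@dataclass
class Gate:
    """Checks every action before it runs (hypothetical policy)."""
    log: AuditLog
    approval_threshold: float = 0.7  # assumed cut-off for human review
    risk: dict = field(default_factory=dict)  # agent id -> running risk score

    def score(self, agent: str, action_risk: float) -> float:
        # Moving average, so an agent's recent behavior shifts its score.
        previous = self.risk.get(agent, action_risk)
        self.risk[agent] = 0.5 * previous + 0.5 * action_risk
        return self.risk[agent]

    def execute(self, agent, action, action_risk, run, approved_by=None):
        score = self.score(agent, action_risk)
        needs_human = score >= self.approval_threshold
        allowed = (not needs_human) or approved_by is not None
        # The decision is logged before the action runs, not afterwards.
        self.log.append({
            "agent": agent,
            "action": action,
            "score": score,
            "approved_by": approved_by,
            "allowed": allowed,
        })
        if not allowed:
            return ("blocked", None)
        return ("allowed", run())


if __name__ == "__main__":
    gate = Gate(AuditLog())
    print(gate.execute("agent-1", "read_report", 0.1, lambda: "ok"))
    print(gate.execute("agent-2", "wire_transfer", 0.9, lambda: "sent"))
    print(gate.log.verify())
```

In this sketch a low-risk action runs immediately, a high-risk one is held until a reviewer is named through `approved_by`, and both outcomes land in the log. Editing any stored record afterwards causes `verify()` to fail, which is the property a retrospective-only monitoring setup would lack.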

OpenBox integrates with enterprise tools and AI development frameworks, including Temporal, n8n, Mastra, LangChain, AWS, and Cursor, so companies can implement governance measures without overhauling their existing architectures. At launch, the platform is available without usage limits, with optional paid features for advanced support.

The company was founded by Asim Ahmad and Tahir Mahmood, who both have backgrounds in regulatory issues and large-scale system development, having previously worked at BlackRock and Microsoft, respectively. OpenBox has already begun to attract interest from enterprise customers in sectors including logistics, healthcare, and media. The platform has also been selected for the Accenture FinTech Innovation Lab London 2026 cohort, an early sign of traction.

The broader mission of OpenBox is to democratize access to enterprise-grade AI governance, making compliance and oversight tools available to organizations of all sizes as the global adoption of AI accelerates.

“The regulatory reckoning for AI agents is no longer a future event — it is happening now. Enterprises that cannot demonstrate governance of their AI systems face real and growing exposure,” said Prashant Malik, Co-creator of Apache Cassandra and General Partner at Tykhe Ventures. “We backed OpenBox because the team combines the technical depth to build this right with the regulatory experience to understand precisely what enterprises and regulators require. That combination is rare, and the window to define this category is now.”

Ahmad emphasized the rapid democratization of AI agents, stating, “AI agents are being democratized at remarkable speed. The infrastructure to trust them should be too. We built OpenBox so that a five-person fintech in Lagos and a 50,000-person bank in London both have access to the same enterprise-grade AI governance.”

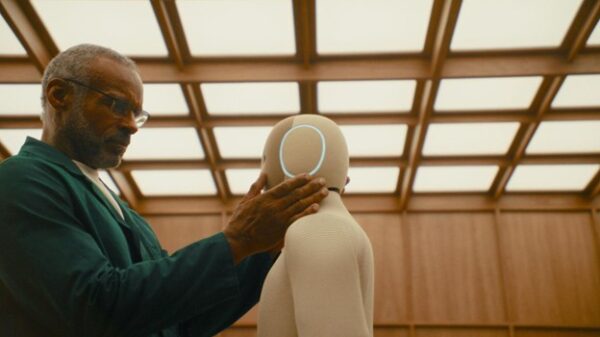

Mahmood added, “Every AI agent is an autonomous system capable of accessing data, calling APIs, and making decisions without human review. As enterprises deploy hundreds of these agents, the governance challenge becomes existential — and the tools to address it have simply not existed until now.”

As AI technologies continue to evolve and permeate various sectors, the availability of robust governance frameworks will be pivotal in shaping how organizations navigate compliance and risk in an increasingly automated world.
