Small quantum computers may offer a more efficient way to process massive datasets than larger classical systems, according to a recent study conducted by researchers from Caltech, Google Quantum AI, MIT, and Oratomic. The findings, posted on arXiv, suggest that quantum systems can significantly reduce memory requirements for essential data tasks, paving the way for potential breakthroughs in machine learning and data analysis.

The researchers assert that quantum systems with a limited number of qubits can execute critical data-processing tasks, including classification, dimension reduction, and linear system solving, whereas classical computers would need exponentially more memory to achieve equivalent performance. This suggests that quantum computing could extend beyond niche applications into broader data workloads.

A persistent challenge in quantum computing has been handling classical data efficiently. Many proposed quantum algorithms require storing large datasets in specialized quantum memory, which is not feasible with existing technology. The team argues that this limitation has constrained the practical applicability of quantum machine learning despite years of theoretical progress.

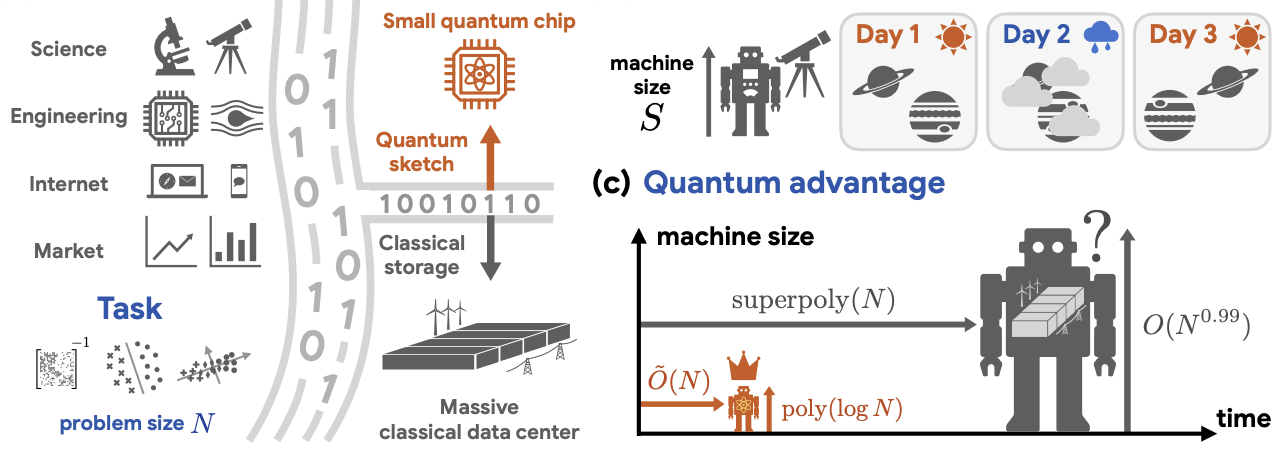

The study introduces a novel approach termed quantum oracle sketching, which enables quantum computers to process data streams without storing the complete dataset. Instead, the system ingests data samples one at a time, performs a series of small quantum operations for each, and discards each sample after processing. Over many samples, these operations build up a compact representation of the data that the quantum computer can then use for further processing.
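The article does not describe the construction in detail. As a rough, hypothetical illustration of the stream-and-discard idea only, the Python sketch below assumes that each data sample is an integer label of a basis state and that the target is a diagonal phase oracle whose phase on |x⟩ is proportional to the frequency of x. Because diagonal phase gates commute, applying one small rotation per sample and then forgetting the sample yields a phase of t times the empirical frequency. This is a classical simulation that stores all 2^n diagonal entries, which a quantum device would not do; `sketch_phase_oracle`, `t`, and the example distribution are illustrative assumptions, not the study's code.

```python
import numpy as np

def sketch_phase_oracle(stream, num_qubits, total_samples, t=1.0):
    """Toy streaming construction of a diagonal phase oracle (hypothetical).

    Each sample x multiplies the phase on basis state |x> by
    exp(i * t / total_samples). The sample is used once and dropped;
    only the accumulated diagonal is kept.
    """
    diag = np.ones(2 ** num_qubits, dtype=complex)  # start from the identity
    step = np.exp(1j * t / total_samples)           # one small rotation per sample
    for x in stream:                                # single pass; samples are not stored
        diag[x] *= step
    return diag  # entry x equals exp(i * t * count(x) / total_samples)

# Usage: samples from a hypothetical 2-qubit distribution arrive one by one.
rng = np.random.default_rng(0)
true_p = np.array([0.4, 0.3, 0.2, 0.1])
N = 10_000
stream = (rng.choice(4, p=true_p) for _ in range(N))  # generator, never materialized
oracle = sketch_phase_oracle(stream, num_qubits=2, total_samples=N)
print(np.angle(oracle))  # phases should lie close to t * true_p
```

The generator makes the "discard after use" property explicit: no list of samples is ever held in memory.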

This method lets quantum systems access classical information while retaining the advantages of quantum computation and avoiding reliance on large-scale quantum memory. It departs from earlier approaches that depended on quantum random access memory (QRAM), which requires complex, resource-intensive hardware.

Complementing quantum oracle sketching is a technique known as classical shadow tomography, which extracts useful information from quantum states using a limited number of measurements. Together, these methods allow the quantum system to produce classical outputs, such as trained models, without reconstructing or storing the entire dataset.
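To give a feel for the measurement side, here is a minimal Python sketch of the standard random-Pauli variant of classical shadows. The article does not say which measurement ensemble the study uses, so that choice is an assumption, and the function names are hypothetical. Each snapshot records a randomly chosen X, Y, or Z basis per qubit and the observed bits; a Pauli-string expectation is then estimated by averaging, over snapshots, 3^k times the product of measured eigenvalues when the bases match the string on its k non-identity positions, and zero otherwise.

```python
import numpy as np
from functools import reduce

# Rotations mapping the X, Y, Z eigenbases onto the computational basis
# (bit 0 corresponds to eigenvalue +1, bit 1 to eigenvalue -1).
H = np.array([[1, 1], [1, -1]], dtype=complex) / np.sqrt(2)
SDG = np.array([[1, 0], [0, -1j]], dtype=complex)
ROTATIONS = {"X": H, "Y": H @ SDG, "Z": np.eye(2, dtype=complex)}

def collect_shadows(state, num_qubits, num_snapshots, rng):
    """Measure `state` in an independent random Pauli basis per qubit."""
    snapshots = []
    for _ in range(num_snapshots):
        bases = ["XYZ"[i] for i in rng.integers(0, 3, size=num_qubits)]
        U = reduce(np.kron, [ROTATIONS[b] for b in bases])  # qubit 0 is most significant
        probs = np.abs(U @ state) ** 2                       # Born-rule probabilities
        outcome = rng.choice(2 ** num_qubits, p=probs / probs.sum())
        bits = [(outcome >> (num_qubits - 1 - q)) & 1 for q in range(num_qubits)]
        snapshots.append((bases, bits))
    return snapshots

def estimate_pauli(snapshots, pauli):
    """Classical-shadow estimate of <P> for a Pauli string such as 'ZZ' or 'XI'."""
    total = 0.0
    for bases, bits in snapshots:
        value = 1.0
        for q, p in enumerate(pauli):
            if p == "I":
                continue
            if bases[q] != p:          # basis mismatch: this snapshot contributes 0
                value = 0.0
                break
            value *= 3.0 * (1 - 2 * bits[q])
        total += value
    return total / len(snapshots)

# Usage: a two-qubit Bell state (|00> + |11>)/sqrt(2).
rng = np.random.default_rng(0)
bell = np.array([1, 0, 0, 1], dtype=complex) / np.sqrt(2)
shadows = collect_shadows(bell, num_qubits=2, num_snapshots=10_000, rng=rng)
for pauli in ("ZZ", "XX", "YY", "ZI"):
    print(pauli, round(estimate_pauli(shadows, pauli), 2))
```

For the Bell state the exact values are 1, 1, -1, and 0, so the printed estimates should land near those. The same stored snapshots answer every query, which is the sense in which a limited set of measurements yields classical outputs.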

Simulation results from the study show that the quantum approach can match classical methods on real-world datasets, including movie review sentiment analysis and single-cell RNA sequencing, while using substantially less memory. The reported memory reduction ranges from four to six orders of magnitude, with the quantum system operating on fewer than 60 logical qubits. These findings, however, are based on simulations and theoretical analysis rather than experiments on physical quantum hardware.

This research offers a new perspective on defining quantum advantage. While much of the focus in the field has been on speed—how quickly a quantum computer can solve a problem compared to a classical counterpart—this study emphasizes memory efficiency, indicating that quantum systems may require far less storage to perform similar tasks.

The implications of these findings are particularly relevant for industries handling large, high-dimensional datasets, such as genomics, finance, and climate modeling. The researchers suggest that quantum systems could facilitate more efficient data compression and processing, reducing the dependency on large-scale storage infrastructures.

Additionally, the study presents theoretical results corroborating the simulations, demonstrating that any classical system achieving comparable performance would require exponentially more memory or significantly more data samples. This distinction persists even if classical systems are granted unlimited time, underscoring that the advantage lies in how information is stored and processed, rather than merely in computational speed.

Three primary applications are highlighted: solving large systems of linear equations, a common task in engineering and physics; classification, routinely used in sentiment analysis and fraud detection; and dimension reduction, which simplifies complex data to reveal underlying trends and is particularly useful in biological data analysis. In each case, a quantum system whose qubit count grows only slowly with problem size can perform the task with a manageable number of data samples, whereas classical systems must trade memory against accuracy.
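One common way to make "a qubit count that grows slowly" concrete is amplitude-style encoding, in which an n-qubit state has 2^n amplitudes, so a vector of dimension N needs about log2 N qubits. The article does not say which encoding the study uses, and real algorithms also need extra working qubits, so the hypothetical helper below only illustrates logarithmic scaling rather than the study's actual qubit counts.

```python
def qubits_for_dimension(dim: int) -> int:
    """Smallest n with 2**n >= dim (assumes amplitude-style encoding)."""
    if dim < 1:
        raise ValueError("dimension must be positive")
    return (dim - 1).bit_length()  # exact integer ceil(log2(dim))

for dim in (1_000, 1_000_000, 1_000_000_000):
    print(dim, qubits_for_dimension(dim))
```

This prints 10, 20, and 30 qubits respectively: each thousandfold increase in dimension adds only about ten qubits.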

The study also explores scenarios involving dynamic data, such as evolving user behavior or changing environmental conditions. In these circumstances, classical systems require significantly more data samples to maintain performance, while quantum systems exhibit greater efficiency, suggesting a substantial advantage for applications reliant on continuously updated information.

Despite the promising results, the study remains primarily theoretical, relying on numerical simulations rather than physical quantum hardware, which is still hampered by noise and high error rates. Scaling from a handful of logical qubits to the hundreds required for practical applications remains a significant challenge.

The researchers note that their analysis assumes several idealized conditions, including consistent access to data samples and well-structured datasets. Real-world data, however, often involve complications such as noise and correlations between samples, so experimental validation is needed to confirm performance at scale.

The study also outlines directions for future work, including hybrid systems that combine quantum and classical methods, for example by using quantum processors for data compression or feature extraction. Exploring additional applications, such as optimization and scientific simulation, could reveal similar advantages. Ultimately, the researchers call for experimental validation on real quantum hardware to provide stronger evidence for the claimed benefits; such experiments could also serve as tests of quantum mechanics' capacity to represent large amounts of information.
