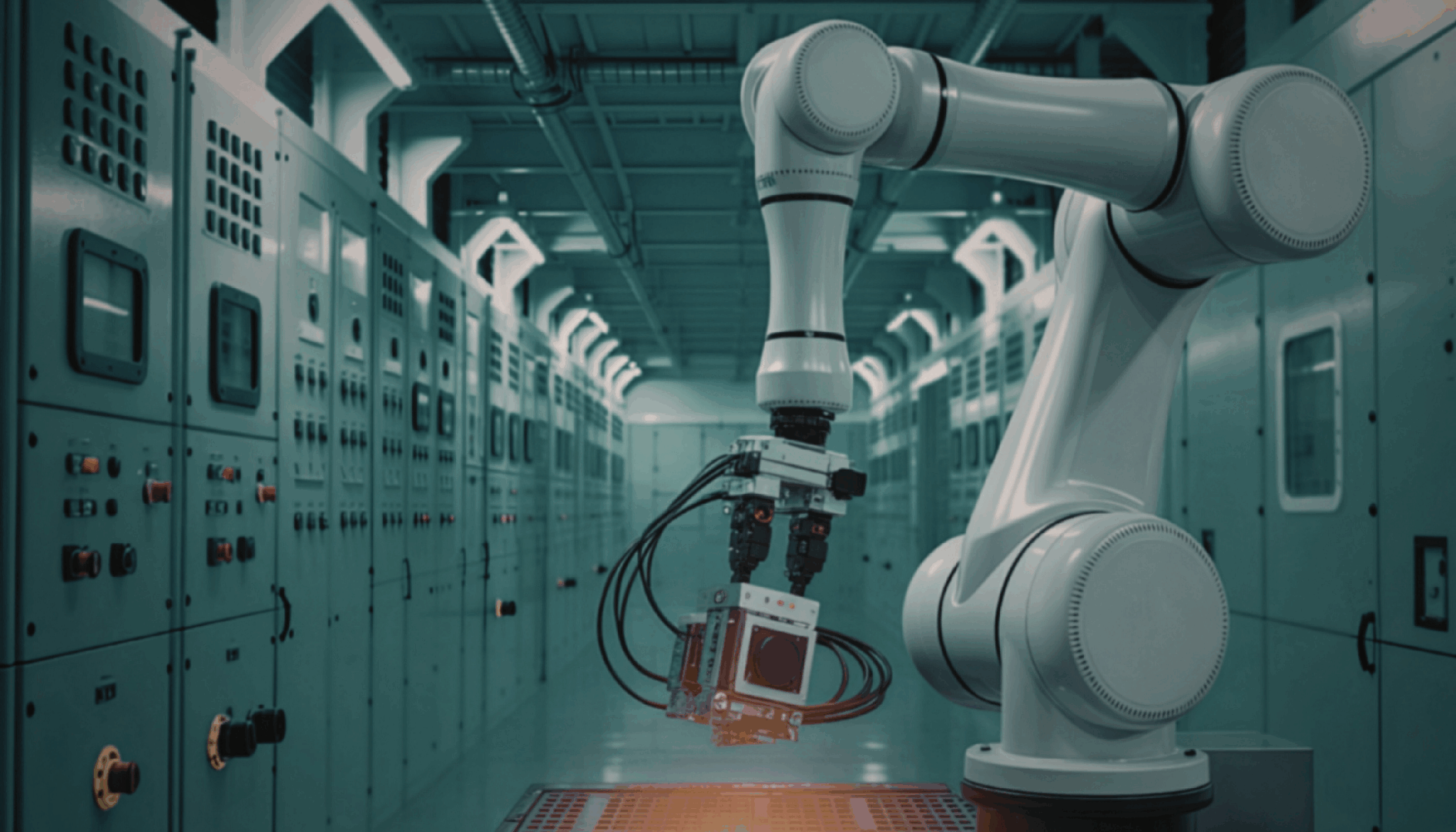

Google DeepMind released Gemini Robotics-ER 1.6 on April 13, 2026, its latest robotics model, aiming to bridge the longstanding gap between robotic demonstrations and real-world deployment. Developed in collaboration with Boston Dynamics, the model significantly enhances spatial and physical reasoning, which robots need in order to navigate and interact effectively in three-dimensional environments. Gemini Robotics-ER 1.6 is available via Google AI Studio, giving startups API access to these capabilities so they can focus on integrating them into their applications rather than building a model of similar scale from scratch.

The release is better understood as a foundation for research and infrastructure development than as a consumer product launch, and its significance lies in the functionality it offers. The model serves as a high-level reasoning layer that interprets visual data from cameras to make decisions about robotic actions. It does not control robot movements directly. Instead, it builds an understanding of the spatial relationships between objects and uses that understanding to guide the lower-level systems that execute tasks.
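The split between a high-level reasoning layer and lower-level execution can be illustrated with a minimal Python sketch. Everything in it is hypothetical: `Subgoal`, `StubReasoner`, `StubController`, and `run_task` are names invented for illustration, the reasoner returns a fixed plan instead of calling any model, and nothing here reflects the actual Gemini Robotics-ER API or Google's architecture.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Subgoal:
    """A high-level instruction handed to the low-level controller."""
    action: str
    target_xy: tuple[float, float]  # assumed convention: normalized image coordinates


class Reasoner(Protocol):
    """High-level layer: turns a camera image and a task into subgoals."""
    def plan(self, image: bytes, task: str) -> list[Subgoal]: ...


class Controller(Protocol):
    """Low-level layer: turns one subgoal into motion, reports success."""
    def execute(self, subgoal: Subgoal) -> bool: ...


class StubReasoner:
    """Stand-in for a vision-language model call; returns a fixed plan."""
    def plan(self, image: bytes, task: str) -> list[Subgoal]:
        return [Subgoal("reach", (0.42, 0.61)), Subgoal("grasp", (0.42, 0.61))]


class StubController:
    """Stand-in for motion control; records actions instead of moving."""
    def __init__(self) -> None:
        self.log: list[str] = []

    def execute(self, subgoal: Subgoal) -> bool:
        self.log.append(subgoal.action)
        return True


def run_task(reasoner: Reasoner, controller: Controller,
             image: bytes, task: str) -> int:
    """Execute the plan in order; stop at the first failed subgoal.

    Returns the number of subgoals completed.
    """
    completed = 0
    for subgoal in reasoner.plan(image, task):
        if not controller.execute(subgoal):
            break
        completed += 1
    return completed


controller = StubController()
done = run_task(StubReasoner(), controller, b"", "pick up the mug")
print(done, controller.log)
```

The point of the separation is that the reasoner never issues motor commands: it only produces subgoals, so the same reasoning layer could in principle sit on top of different robot hardware.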

Improvements in version 1.6 include enhanced abilities in precise pointing, enabling better identification of spatial relationships and object interactions. The model also excels in counting occluded objects—those partially hidden from view—and synthesizes input from multiple cameras for a more comprehensive understanding of dynamic scenes. One notable feature is its capacity for instrument reading, allowing the model to interpret analog gauges and industrial instruments without requiring them to be retrofitted with digital interfaces. This capability, developed in tandem with Boston Dynamics, represents a significant step towards practical application in industrial inspections.

Another critical area of advancement is safety reasoning. Gemini Robotics-ER 1.6 shows a marked improvement over its predecessor, Gemini 3.0 Flash, by scoring six to ten percentage points higher in identifying potential hazards based on injury reports. In environments where human interaction is prevalent, such enhancements are crucial, signaling a move towards safer, more reliable robotic systems.

While benchmark results offer a glimpse into the model’s capabilities, the broader implications for the robotics industry are what truly matter. Robotics has a history of impressive performance metrics that fail to translate into effective products. Central to the evolution toward practical general-purpose robots is the idea of embodied reasoning: the ability to build causal models of the physical world and update them in real time.

Gemini Robotics-ER 1.6 represents progress in that direction. Its improvements in spatial reasoning, multi-view synthesis, and instrument reading indicate a system less reliant on familiar training scenarios. Although it does not achieve complete autonomy, it marks a step toward the kind of AI-driven automation that can function in non-standard environments, a significant hurdle for the industry.

The implications of this release are twofold for startups engaged in the physical AI sector. On one hand, the availability of such a powerful foundation model through Google AI Studio lowers the barrier to entry by allowing emerging companies to build applications that leverage these advanced capabilities. This accessibility could expedite development timelines and diminish the computational resources needed to produce viable products in sectors like warehouse automation and industrial inspection.

Conversely, there is a risk that this democratization of technology may amplify competition, concentrating advantages among the most resourceful companies. As noted by BCG, the competitive edge in robotics is shifting toward those who can gather specialized domain data and integrate their hardware with software rather than merely focusing on training larger models.

Despite the promise of Gemini Robotics-ER 1.6, expectations about its immediate impact should be tempered. Deploying robots in unpredictable, unstructured environments remains difficult. Benchmark performance is not the same as effective real-world operation, and hardware reliability and previously unseen edge cases continue to pose risks. Even so, the model gives reason for optimism about the trajectory of robotics: the pace of improvement in reasoning capabilities suggests that robots will increasingly be able to operate effectively in diverse environments.

As the robotics industry continues to integrate AI advancements, founders working at the intersection of physical hardware and artificial intelligence will need to weigh what these developments mean for their own products. The release marks a notable juncture in the evolution of robotic capabilities.
