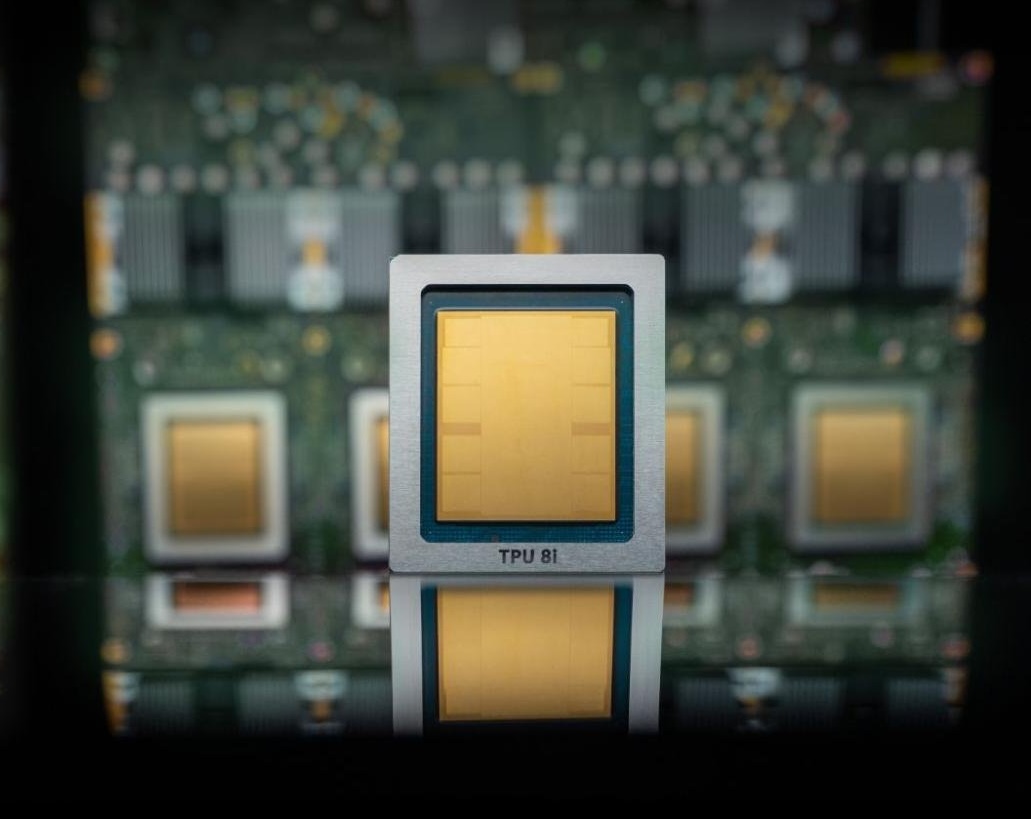

Google Cloud unveiled the eighth generation of its custom-built AI chips, known as tensor processing units (TPUs), on Wednesday, splitting the line into two models: the TPU 8t for model training and the TPU 8i for inference. The split is meant to improve performance while cutting energy consumption and cost for users.

The TPU 8t handles training, while the TPU 8i handles inference, the work of running a trained model each time a user submits a prompt. Google claims the new TPUs deliver up to three times faster AI model training and 80% better performance per dollar, and says more than 1 million of them can be clustered together. The pitch to users is more computational power with less energy use and lower cost than earlier generations. Google calls the chips TPUs rather than GPUs because the name dates to their original custom, low-power design tailored for tensor processing.
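To put those headline figures in concrete terms, here is a rough back-of-the-envelope sketch. It assumes the "up to" numbers apply in full to a fixed workload, which Google's claim does not guarantee, and the function names are hypothetical, chosen only for illustration.

```python
# Rough arithmetic on Google's claimed gains. Assumes the "up to" figures
# apply fully to a fixed workload, which real workloads may not achieve.

def relative_cost(perf_per_dollar_gain: float) -> float:
    """Cost of a fixed workload relative to the previous generation.

    A gain of 0.80 means each dollar buys 1.8x as much work,
    so the same work costs 1 / 1.8 of what it did before.
    """
    return 1.0 / (1.0 + perf_per_dollar_gain)


def relative_time(speedup: float) -> float:
    """Training time relative to the previous generation for a given speedup."""
    return 1.0 / speedup


if __name__ == "__main__":
    cost = relative_cost(0.80)   # claimed 80% better performance per dollar
    time = relative_time(3.0)    # claimed up to 3x faster training
    print(f"Same workload costs about {cost:.0%} of the earlier price")
    print(f"Same training run takes about {time:.0%} of the earlier time")
```

Under these assumptions, 80% better performance per dollar works out to paying roughly 56% of the earlier price for the same work, a saving of about 44% rather than 80%.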

However, analysts caution against viewing this development as a direct challenge to Nvidia’s dominance in the chip market. Like other major cloud providers, including Microsoft and Amazon, Google is integrating its TPUs as a supplementary option alongside Nvidia-based systems rather than outright replacing them. Notably, Google has announced that its cloud infrastructure will also feature Nvidia’s latest chip, Vera Rubin, later this year, indicating a continued reliance on Nvidia’s technology.

That could change as hyperscalers such as Amazon, Microsoft, and Google advance their own AI chips and enterprises move AI workloads to their clouds, which would reduce the hyperscalers’ dependence on Nvidia. For now, though, market conditions do not favor betting against Nvidia. As chip market analyst Patrick Moorhead joked on X, he predicted back in 2016, when Google launched its first TPU, that the chip could pose a threat to Nvidia and Intel. Nvidia now has a market capitalization of nearly $5 trillion, so that forecast did not play out as expected.

If Nvidia’s trajectory continues as planned, Google’s emergence as a more formidable AI cloud provider could inadvertently result in increased business for the chip maker, even if many workloads utilize Google’s proprietary chips. This dynamic underscores the complex interdependencies within the tech ecosystem.

Underscoring that collaboration, Google also announced a new partnership with Nvidia on computer networking, aimed at improving the performance of Nvidia-based systems in its cloud. The two companies are working to improve Falcon, a software-based networking technology that Google introduced and open-sourced in 2023 through the Open Compute Project, a key organization for open-source data center hardware.

With these advancements, Google aims to position itself as a leader in the AI cloud space, offering enhanced computational efficiency while maintaining essential partnerships in the industry. As the market for AI continues to evolve, the interplay between proprietary technologies and established players like Nvidia will be crucial in shaping the future landscape of cloud computing.
