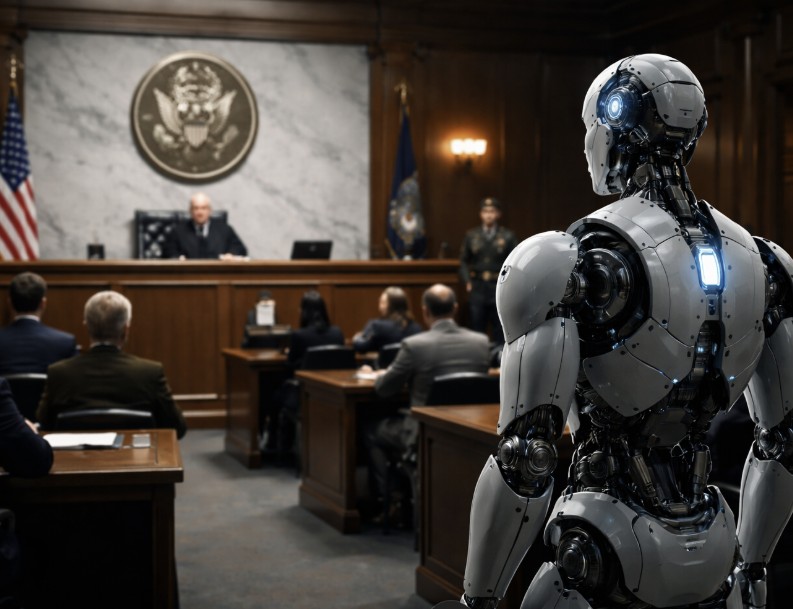

The conflict between AI company Anthropic and the U.S. government has escalated into a legal battle after the Department of Defense classified the company as a potential national security risk. The designation could have significant repercussions, effectively barring Anthropic from many government contracts and prompting agencies to stop using its technology.

In its lawsuit, Anthropic contends that the government’s decision is politically motivated, punishing the company for establishing ethical guidelines that limit military applications of its AI models. The company has publicly stated that its systems should not be used for mass surveillance of U.S. citizens or for fully autonomous weapons. In contrast, the Pentagon mandates that software suppliers grant rights of use for any lawful purpose, including military applications.

Classifying an American company as a potential national security threat is unusual; such actions are typically directed at foreign entities or companies with ties to rival nations. According to reports, President Donald Trump ordered federal agencies to stop using Anthropic technology until the alleged security concerns are addressed.

Anthropic argues that the designation infringes on its rights and entrepreneurial freedom, and that it is being penalized for setting ethical boundaries on the use of its AI. The company maintains that formulating these guidelines is an exercise of its right to freedom of expression.

While Anthropic challenges the government’s decision, OpenAI, the developer of ChatGPT, has seized the opportunity to fill the gap left by Anthropic. OpenAI has secured a contract with the Department of Defense to provide AI technology for government projects, potentially allowing it to assume Anthropic’s previous role within military contexts.

OpenAI CEO Sam Altman said the company would comply with government requirements but emphasized technical safeguards designed to prevent its technology from being misused for mass surveillance of U.S. citizens. The deal has reportedly caused internal tensions at OpenAI: Caitlin Kalinowski, the company's head of robotics, resigned in protest.

The dispute underscores a fundamental tension within the AI industry: governments view AI as a strategic asset for defense and security, while many developers warn against military applications that lack robust ethical guidelines. Anthropic CEO Dario Amodei has emerged as a prominent voice in this debate. He emphasizes that modern AI models can aggregate vast amounts of publicly available data into detailed profiles of individuals, and he argues that the technology is not yet reliable enough for fully autonomous weapon systems.

In a blog post, Amodei wrote that Anthropic deliberately avoids developing or supplying products that, if misused, could put American soldiers or civilians at risk.

The outcome of the lawsuit could have significant implications for the AI industry at large. Should the court side with Anthropic, it could restrict the U.S. government’s ability to compel technology companies to collaborate with military efforts. Conversely, a ruling in favor of the government would bolster state security interests in shaping AI development.

The legal clash between Anthropic and the U.S. government highlights how closely geopolitical interests and technological development have become intertwined. As Washington treats AI as a strategic resource, individual companies are trying to draw clear lines around the military use of their systems. With OpenAI entering the market as a prospective alternative supplier, the competitive landscape for government AI contracts is shifting. The resolution of this dispute may ultimately define the future relationship between technology firms and military and intelligence agencies.
