As artificial intelligence (AI) continues to advance rapidly, concerns are mounting in the academic community that traditional research roles could become redundant. These fears are sharpened by ongoing job losses in the university sector. AI systems, including large language models (LLMs), are reshaping research practice at an unprecedented pace.

Despite these technological disruptions, the fundamental structure of research remains intact. Research in every field, whether science, engineering, the social sciences, or the humanities, typically follows six stages: selecting problems and questions; assessing existing knowledge; generating ideas and hypotheses; conducting research; analyzing results; and communicating findings. AI now influences every stage of this workflow and can make each one more efficient and effective.

LLMs, for instance, excel at specific tasks such as literature reviews and idea generation, and can significantly speed up both. Human expertise, however, remains essential to maintaining the quality and rigor of research. LLMs can identify highly cited papers and summarize a field's key positions, but influence in research is complex and often contested. Scientific judgment goes beyond metrics or consensus; it requires nuanced understanding and interpretation.

When it comes to formulating research questions, LLMs can suggest plausible avenues that appear technically sound. Without the domain expertise that comes from rigorous study, however, they may struggle to judge which questions are genuinely significant and worth sustained inquiry. Moving from questions to new knowledge requires critical human thinking: challenging assumptions and exploring contradictions. Such intellectual engagement demands responsibility, imagination, and courage, qualities that AI systems, however sophisticated, cannot replicate.

Proponents of AI point to the rapid pace of technological advancement, suggesting that any limitations currently ascribed to AI may soon be outdated. Yet as these systems grow more capable, human oversight and responsibility become more important, not less. Fully automated discovery may be feasible in some cases, but it is neither a realistic nor a desirable model for research more broadly. Capability without judgment could undermine trust in the research process.

Interactions between LLMs and human researchers can be most productive when they function as peer reviewers of each other’s work. Researchers are encouraged to leverage their expertise to evaluate AI outputs for usefulness, accuracy, and bias. One effective strategy is to prompt LLMs to explain their reasoning in detail, allowing researchers to scrutinize the logic behind their outputs. Conversely, LLMs can help challenge human assumptions, exposing flaws and providing alternative perspectives. In this collaborative dynamic, the goal is to stimulate critique rather than establish authority.

Structured prompting frameworks, such as RISEN (Role, Instruction, Steps, End, Narrowing), can make interaction between researchers and AI more systematic by building clarity, constraints, and critical intent into the process. This helps ensure that prompt design strengthens the research rather than serving as a mere shortcut.
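To make the idea concrete, here is a minimal sketch in Python, assuming the five RISEN components are simply labelled sections of a single prompt. The `RisenPrompt` class, its field names, and the example content are hypothetical illustrations, not part of any published specification of the framework. The example step asking the model to explain its reasoning reflects the strategy of scrutinizing an LLM's logic described above.

```python
from dataclasses import dataclass


@dataclass
class RisenPrompt:
    """Hypothetical container for the five RISEN components."""

    role: str         # R: the persona the model should adopt
    instruction: str  # I: the core task
    steps: list[str]  # S: ordered steps the model should follow
    end: str          # E: the desired end result or output format
    narrowing: str    # N: constraints that narrow the scope

    def render(self) -> str:
        """Assemble the components into one labelled prompt string."""
        numbered = "\n".join(
            f"{i}. {step}" for i, step in enumerate(self.steps, start=1)
        )
        return (
            f"Role: {self.role}\n"
            f"Instruction: {self.instruction}\n"
            f"Steps:\n{numbered}\n"
            f"End: {self.end}\n"
            f"Narrowing: {self.narrowing}"
        )


if __name__ == "__main__":
    # Invented example: asking the model to act as a critical reviewer.
    prompt = RisenPrompt(
        role="Critical peer reviewer in materials science",
        instruction="Assess the draft hypothesis below for weaknesses.",
        steps=[
            "Restate the hypothesis in your own words",
            "List its untested assumptions",
            "Explain your reasoning for each concern",
        ],
        end="A numbered list of concerns, each with a justification",
        narrowing="Name only sources you can identify; flag uncertainty explicitly",
    )
    print(prompt.render())
```

Keeping each component as a separate field makes it harder to omit one, for example the narrowing constraints, and lets a researcher reuse a role or set of steps across several prompts.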

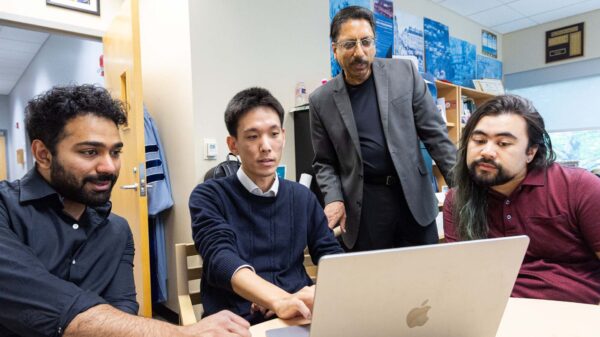

Changing Dynamics in PhD Supervision

One of the most significant shifts in academic practice is occurring in PhD supervision, which is evolving from a traditional two-way relationship between student and supervisor into a three-party dynamic that includes AI. In this new configuration, students provide curiosity and creativity, LLMs deliver computational insights and broad access to information, and supervisors bring wisdom, values, and judgment. This partnership makes good supervision more vital than ever in the age of AI.

LLMs cannot be authors of research papers, because authorship is tied to intellectual contribution and accountability. However much they assist in the research process, LLMs cannot be held responsible for its outcomes. As more research tasks are automated, humans can gain time to engage deeply with complex questions, integrate insights across disciplines, and address societal impacts.

Concerns about the environmental cost of AI are valid, and here too human oversight is critical. In many scientific contexts, AI does not operate independently: researchers guide its application, prioritizing the most promising hypotheses and thereby managing energy consumption. AI-assisted research could also speed the discovery of innovative materials and technologies that lead to more efficient energy systems, contributing to sustainability efforts.

Beyond climate change, responsible AI use holds promise for addressing other significant societal challenges, enhancing health and social resilience. The primary risk lies not in AI’s capabilities but in human disengagement. If researchers maintain curiosity and accountability, integrating AI within a robust framework of peer review and transparent validation, these systems can serve as powerful tools that amplify human ingenuity rather than diminish it.

Bashir M. Al‑Hashimi is vice-president (research and innovation) at King’s College London. Nick Jennings is vice-chancellor of Loughborough University. LLMs were used as tools to support drafting, refinement, and critical exploration of ideas in this article. All interpretations, arguments, and conclusions are the authors’ own.
