A detailed legal opinion published yesterday has raised serious concerns regarding the UK Home Office’s use of artificial intelligence (AI) in its asylum decision-making process, suggesting certain practices could be unlawful, especially when applicants are not informed that AI tools are being employed. The opinion was prepared by barristers from Cloisters Chambers, including Robin Allen KC and Dee Masters, alongside Joshua Jackson from Doughty Street Chambers, and was commissioned by the non-profit organization Open Rights Group.

The legal analysis argues that the Home Office’s reliance on generative AI tools to process asylum applications may breach its legal obligations relating to procedural fairness, data protection, and equality law. According to the authors, the opinion could help asylum seekers challenge decisions in which AI tools were used to evaluate their claims. Open Rights Group says this opens the door to legal challenges, particularly by applicants who suspect that AI influenced the assessment of their eligibility for protection in the UK.

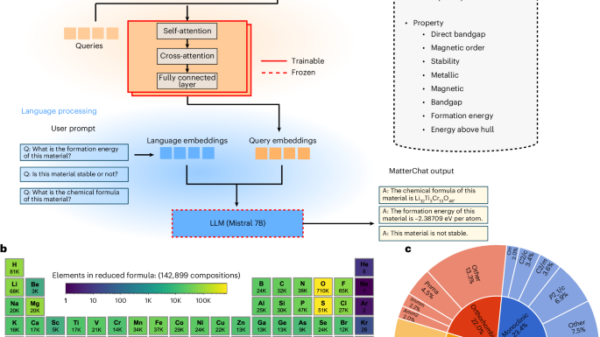

The Home Office reportedly uses two generative AI tools in the asylum process: the Asylum Case Summarisation (ACS) tool, which summarises information provided by applicants during interviews, and the Asylum Policy Search (APS) tool, which helps caseworkers find country-of-origin information. The authors point out that both tools generate new text for decision-makers rather than merely organising existing information. This raises the concern that important details may be filtered out, degrading the quality of decisions. Moreover, the tools’ outputs are not shared with asylum seekers, and applicants are not told that AI has been used on their claims.

The opinion also questions the tools’ accuracy. It notes that a pilot study found the ACS tool produced inaccurate summaries 9% of the time, and that 5% of APS users were unsure of its accuracy. The authors also highlight how little public information exists about how the accuracy of these systems is measured or evaluated, and about whether adequate safeguards exist to prevent errors from influencing asylum decisions.

The opinion stresses that the Home Office may have a heightened duty to scrutinise the performance and implications of these AI tools before deploying them in asylum determinations. Failing to adequately assess their accuracy, or the risks of bias and discrimination they carry, could breach the department’s Tameside duty of inquiry: the public law duty on a decision-maker to take reasonable steps to obtain the information it needs to decide properly.

The opinion also raises concerns about how AI may affect decision-makers’ reasoning. Established public law principles require caseworkers to consider all relevant evidence, including applicants’ testimony and pertinent country-of-origin material. If decision-makers rely too heavily on AI-generated summaries, there is a “significant risk” that vital considerations may be overlooked, leading to decisions based on “material errors of fact.” This risk is compounded by the absence of safeguards for cross-checking AI outputs against the original materials, and by the fact that applicants cannot see the summaries and so cannot point out inaccuracies.

The opinion emphasises the need for transparency. Given the serious consequences for asylum seekers whose claims may be decided on the basis of flawed information, the authors argue that applicants should be told that AI is being used to assess their claims, given the output of the AI-generated summaries, and informed about how the technology is used in the decision-making process.

The authors state: “[G]iven the gravity of the consequences for asylum-seekers if their claims are determined on the basis of inaccurate information and the nature of the interests at stake, we consider that – as a matter of procedural fairness – asylum-seekers have a common law right to be informed that AI is being used in the determination of their claims, how it is being used, and to be provided with the output of the AI-generated summaries.” The point applies with particular force to the ACS tool, which summarises sensitive information supplied by asylum seekers themselves, who are therefore well placed to spot and correct errors.

Beyond procedural fairness, the opinion identifies data protection and equality risks. Because the ACS tool processes sensitive personal data, including information about race, religion, political beliefs, and sexual orientation, its use engages UK GDPR obligations of transparency and accuracy, as well as applicants’ right of access to their data. In addition, the absence of a published Equality Impact Assessment suggests that the Home Office has not sufficiently demonstrated compliance with the Public Sector Equality Duty or assessed the tools for potential discriminatory effects.

Lastly, the authors point to the limited oversight currently exercised by civil society and by regulators such as the Independent Chief Inspector of Borders and Immigration, which weakens accountability for the technologies being used. Allen and Masters commented, “If AI tools are influencing asylum decisions, there must be full transparency about how those systems operate and how their outputs are used. Without that transparency, it becomes extremely difficult to ensure that decisions affecting fundamental rights are lawful and fair.” As debate over the role of AI in high-stakes decision-making continues, the opinion may prompt significant changes to how the Home Office uses technology in asylum determinations.
