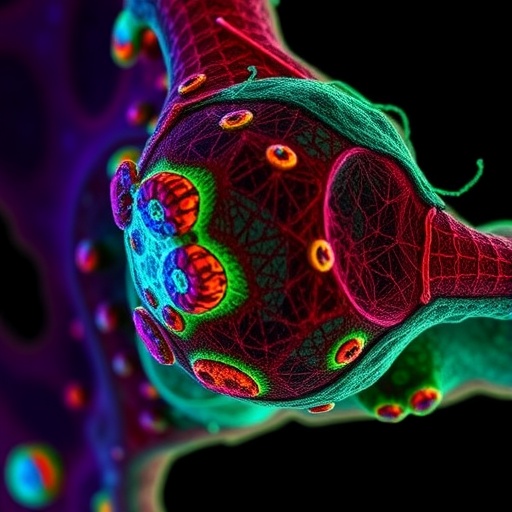

Researchers from Harbin Institute of Technology and Peking University have reported an advance in super-resolution fluorescence microscopy that tackles a persistent problem in cellular imaging: background artifacts that undermine quantitative analysis. Their technique, the Adaptive Self-inspired Noise2Noise (Adaptive-SN2N) framework, changes how noisy images are prepared for denoising, enabling clearer and more reliable visualization and segmentation of subcellular structures such as mitochondria and the endoplasmic reticulum (ER).

Fluorescence microscopy is central to observing cellular dynamics, but it requires balancing adequate illumination against the risks of photobleaching and phototoxicity. To limit these risks during extended live-cell imaging, researchers typically reduce light exposure, which yields low photon counts and degrades image quality by lowering the signal-to-noise ratio (SNR). Existing denoising methods, including self-supervised deep learning models such as Noise2Noise and Noise2Void, have removed the need for clean reference images and offer good photon efficiency. However, these methods can also create artificial structures in signal-free regions, which can mislead biological interpretation and downstream computational analysis.

The root of the problem lies in a common preprocessing step, the widely used “patch-wise normalization.” Because each patch is rescaled to its own intensity range, faint random noise in background regions is stretched until local statistical fluctuations look like contrast, and the neural network learns to treat this noise as genuine biological features. In images with sparse signals or mostly dark backgrounds, these artifacts can cause false positives in downstream tasks such as organelle segmentation and synaptic pattern recognition, undermining the validity of the results.

To address these challenges, the team combined expertise in optical super-resolution imaging, deep learning algorithms, and cell biology. Through mathematical analysis, they showed that patch-wise normalization inflates noise variance in low-dynamic-range patches by a factor inversely proportional to the square of the patch’s local intensity range. This stretches faint background noise across the full normalized interval [0,1], where it forms artificial structures. Conversely, in sparse patches dominated by a few high-intensity pixels, the background pixels are compressed into a negligible intensity range, producing large shifts in the data distribution that make effective denoising harder.
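
The scaling argument can be made concrete with a short derivation under one stated assumption: patch-wise normalization is taken to be min-max rescaling with the patch extrema treated as fixed (the article does not give the exact formula the team analysed). For background noise of variance $\sigma^2$ in a patch with intensity range $R_p$, compared with image-wise normalization over the global range $R_g$:

$$
\tilde{x} = \frac{x - x_{\min}}{R}, \qquad
\operatorname{Var}(\tilde{x}) = \frac{\sigma^2}{R^2}, \qquad
\frac{\operatorname{Var}_{\text{patch}}(\tilde{x})}{\operatorname{Var}_{\text{global}}(\tilde{x})}
= \frac{\sigma^2 / R_p^2}{\sigma^2 / R_g^2}
= \left(\frac{R_g}{R_p}\right)^2
$$

With illustrative numbers (not from the study) of $\sigma = 5$, $R_g = 4000$ and $R_p = 40$, the normalized noise standard deviation is $5/4000 = 0.00125$ under global normalization but $5/40 = 0.125$ under patch-wise normalization, a $(4000/40)^2 = 10^4$-fold increase in variance. Noise that was negligible now spans a visible fraction of $[0,1]$.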

The core of Adaptive-SN2N is a risk-aware adaptive normalization strategy. The algorithm quantifies each image patch’s statistical risk profile, including its mean intensity, standard deviation, and skewness, and uses it to choose a normalization scheme for that patch. For high-risk patches, dominated by weak backgrounds or sparse bright signals, it applies image-wise global normalization instead of patch-wise normalization. For low-risk patches rich in structural information, it retains patch-wise normalization to enhance local contrast and preserve fine detail.
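
The per-patch decision can be sketched in Python. This is a minimal illustration, not the published algorithm: it assumes min-max normalization, and the thresholds (`DIM_FRACTION`, `SPARSE_SKEWNESS`) and helper names (`is_high_risk`, `adaptive_normalize`) are hypothetical, since the article names the risk factors (mean, standard deviation, skewness) but not how they are combined.

```python
import numpy as np

# Hypothetical thresholds: the article does not publish the decision rule,
# so these values are illustrative only.
DIM_FRACTION = 0.1     # patch mean AND std below 10% of the image's -> weak background
SPARSE_SKEWNESS = 2.0  # strongly right-skewed patch -> few bright pixels on dark background


def skewness(x: np.ndarray) -> float:
    """Sample skewness (third standardized moment), computed with NumPy only."""
    x = np.asarray(x, dtype=np.float64).ravel()
    sd = x.std()
    if sd == 0:
        return 0.0
    return float(np.mean(((x - x.mean()) / sd) ** 3))


def is_high_risk(patch: np.ndarray, image: np.ndarray) -> bool:
    """Flag patches where patch-wise normalization is likely to create artifacts."""
    weak_background = (patch.mean() < DIM_FRACTION * image.mean()
                       and patch.std() < DIM_FRACTION * image.std())
    sparse_bright = skewness(patch) > SPARSE_SKEWNESS
    return bool(weak_background or sparse_bright)


def min_max(x: np.ndarray, lo: float, hi: float, eps: float = 1e-8) -> np.ndarray:
    """Rescale x so that [lo, hi] maps to [0, 1]."""
    return (x - lo) / (hi - lo + eps)


def adaptive_normalize(patch: np.ndarray, image: np.ndarray) -> tuple[np.ndarray, str]:
    """Return the normalized patch and the name of the scheme that was used."""
    if is_high_risk(patch, image):
        # Image-wise (global) range: background noise keeps its small true amplitude.
        return min_max(patch, image.min(), image.max()), "global"
    # Patch-wise range: stretches local contrast in structure-rich patches.
    return min_max(patch, patch.min(), patch.max()), "patch"


# Usage: a dim noisy background with a bright ramp-shaped "structure" in one quadrant.
rng = np.random.default_rng(0)
image = rng.normal(10.0, 2.0, size=(128, 128))
image[64:, 64:] += np.tile(np.linspace(0.0, 1000.0, 64), (64, 1))

background = image[:32, :32]
structure = image[64:96, 64:96]
sparse = background.copy()
sparse[10:12, 10:12] += 1000.0  # one small bright spot on a dark patch

bg_out, bg_mode = adaptive_normalize(background, image)
st_out, st_mode = adaptive_normalize(structure, image)
sp_out, sp_mode = adaptive_normalize(sparse, image)
```

In this toy image, the dim background patch and the patch with a single bright spot are routed to global normalization, while the ramp-shaped structure keeps patch-wise normalization. Using the global range for risky patches keeps background noise a tiny fraction of [0,1] instead of stretching it across the whole interval.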

Alongside this, Adaptive-SN2N uses a self-inspired learning scheme that needs only a single noisy image. Using diagonal spatial resampling and Fourier-domain interpolation, the framework generates twin image pairs that share identical biological content but carry independent noise realizations. Because the two images differ only in their noise, each can serve as the training target for the other, so no clean ground-truth reference is required; this self-constrained training refines denoising performance while guarding against artifact creation. The approach is an example of self-supervised machine learning tailored to biological imaging.
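
A minimal sketch of twin-pair generation follows. It assumes one plausible reading of “diagonal spatial resampling”: each 2×2 pixel block is split along its two diagonals, each diagonal pair is averaged to give two half-size images, and each half-size image is restored to full size by zero-padding its centred Fourier spectrum. The function names are hypothetical, and the published pipeline may differ in details such as edge handling.

```python
import numpy as np


def diagonal_resample(img: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Average the two diagonals of every 2x2 block into two half-size images."""
    img = np.asarray(img, dtype=np.float64)
    h, w = img.shape
    img = img[: h - h % 2, : w - w % 2]             # crop to even size
    a = (img[0::2, 0::2] + img[1::2, 1::2]) / 2.0   # main diagonal of each block
    b = (img[0::2, 1::2] + img[1::2, 0::2]) / 2.0   # anti-diagonal of each block
    return a, b


def fourier_upsample(img: np.ndarray, factor: int = 2) -> np.ndarray:
    """Interpolate by zero-padding the centred 2D spectrum."""
    h, w = img.shape
    H, W = h * factor, w * factor
    spec = np.fft.fftshift(np.fft.fft2(img))
    padded = np.zeros((H, W), dtype=complex)
    # Place the small spectrum so its zero-frequency bin lands on the large grid's centre.
    top, left = H // 2 - h // 2, W // 2 - w // 2
    padded[top:top + h, left:left + w] = spec
    out = np.fft.ifft2(np.fft.ifftshift(padded)).real
    return out * factor * factor  # ifft2 divides by H*W; rescale to preserve the mean


def make_twin_pair(noisy: np.ndarray) -> tuple[np.ndarray, np.ndarray]:
    """Two full-size images with shared structure and (ideally) independent noise."""
    a, b = diagonal_resample(noisy)
    return fourier_upsample(a), fourier_upsample(b)


# Usage: a smooth synthetic structure plus Gaussian noise.
rng = np.random.default_rng(1)
yy, xx = np.mgrid[0:256, 0:256]
clean = 100.0 + 50.0 * np.sin(xx / 12.0) * np.cos(yy / 17.0)
noisy = clean + rng.normal(0.0, 10.0, clean.shape)
twin1, twin2 = make_twin_pair(noisy)  # each can act as the other's training target
```

Both half-size images are centred on the same point of each 2×2 block, which is why they share structure; their pixels are disjoint, which is why their noise is independent, assuming pixel-wise independent noise.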

The team also addressed the boundary discontinuities and stitching artifacts common in patch-based inference by blending overlapping sliding windows with Gaussian weights. With a 50% overlap between windows and smooth 2D Gaussian weighting functions, predicted patches are merged without abrupt transitions, preserving spatial coherence. Ablation studies confirmed that this approach significantly improves denoising quality across multiple datasets and metrics, indicating that it generalizes well.
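
Gaussian-weighted overlap blending can be sketched as follows. The 50% overlap comes from the article; the Gaussian width (`sigma_frac = 0.25` of the patch size), the patch size of 64, snapping the last tile to the image edge, and the function names are assumptions for illustration, and `denoise_fn` stands in for a trained network.

```python
import numpy as np
from typing import Callable


def gaussian_window(size: int, sigma_frac: float = 0.25) -> np.ndarray:
    """Separable 2D Gaussian weight, peaked at the patch centre and positive everywhere."""
    coords = np.arange(size) - (size - 1) / 2.0
    g = np.exp(-0.5 * (coords / (sigma_frac * size)) ** 2)
    return np.outer(g, g)


def tile_starts(length: int, patch: int, stride: int) -> list[int]:
    """Tile offsets at the given stride; a final tile is snapped to the far edge."""
    starts = list(range(0, length - patch + 1, stride))
    if starts[-1] != length - patch:
        starts.append(length - patch)
    return starts


def stitch_predict(image: np.ndarray,
                   denoise_fn: Callable[[np.ndarray], np.ndarray],
                   patch: int = 64) -> np.ndarray:
    """Run denoise_fn on 50%-overlapping tiles and blend them with Gaussian weights."""
    if min(image.shape) < patch:
        raise ValueError("image must be at least one patch in each dimension")
    stride = patch // 2  # 50% overlap between neighbouring tiles
    weight = gaussian_window(patch)
    acc = np.zeros(image.shape, dtype=np.float64)
    wsum = np.zeros(image.shape, dtype=np.float64)
    for y in tile_starts(image.shape[0], patch, stride):
        for x in tile_starts(image.shape[1], patch, stride):
            tile = image[y:y + patch, x:x + patch]
            acc[y:y + patch, x:x + patch] += denoise_fn(tile) * weight
            wsum[y:y + patch, x:x + patch] += weight
    # Each pixel is a weighted average of all tile predictions covering it;
    # tile borders (low weight) contribute less than tile centres (high weight).
    return acc / wsum


# Usage: with an identity "model", blending must reproduce the input exactly.
rng = np.random.default_rng(0)
img = rng.normal(size=(200, 150))
restored = stitch_predict(img, lambda t: t)
```

Because the weights fall off toward tile borders, a tile’s least reliable edge pixels are dominated by a neighbouring tile’s more reliable centre. The identity check is a quick sanity test that the weights are normalized correctly.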

Experiments on both structured illumination microscopy (SIM) and spinning-disk SIM platforms demonstrated the framework’s performance in complex live-cell dual-color imaging. Adaptive-SN2N substantially reduced false segmentation in mitochondrial background areas under Otsu thresholding, a widely used automated segmentation method. It also markedly improved the morphological continuity of the ER network, turning the fragmented skeleton produced by baseline methods into a continuous, topologically faithful network. This fidelity enables precise tracking of mitochondrial fission and fusion, dynamic events that are vital to understanding cellular physiology and pathology.
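
Otsu’s method picks the intensity cut that maximizes the between-class variance of the image histogram. The sketch below is a plain NumPy version for illustration (scikit-image provides a production implementation as `skimage.filters.threshold_otsu`); the synthetic image is hypothetical. It shows why background artifacts matter for this step: any hallucinated background structure brighter than the cut would be counted as foreground.

```python
import numpy as np


def otsu_threshold(image: np.ndarray, bins: int = 256) -> float:
    """Otsu's method: choose the cut that maximizes between-class variance."""
    hist, edges = np.histogram(np.asarray(image).ravel(), bins=bins)
    centers = (edges[:-1] + edges[1:]) / 2.0
    p = hist / hist.sum()
    w0 = np.cumsum(p)            # probability of the "background" class for each cut
    mu = np.cumsum(p * centers)  # cumulative first moment up to each cut
    mu_total = mu[-1]
    w1 = 1.0 - w0
    between = np.zeros_like(w0)
    valid = (w0 > 1e-12) & (w1 > 1e-12)  # skip cuts that leave one class empty
    between[valid] = (mu_total * w0[valid] - mu[valid]) ** 2 / (w0[valid] * w1[valid])
    k = int(np.argmax(between))
    return float(edges[k + 1])   # upper edge of the last background bin


# Usage: a dark, noisy background with one bright synthetic "organelle".
rng = np.random.default_rng(0)
image = rng.normal(10.0, 2.0, size=(128, 128))
image[40:80, 40:80] = rng.normal(100.0, 5.0, size=(40, 40))
t = otsu_threshold(image)
mask = image > t
background_false_positives = int(mask[:30, :30].sum())
```

Here the background is clean noise, so no background pixel crosses the threshold; an artifact-laden background would add spurious foreground pixels to `mask`.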

Notably, Adaptive-SN2N achieves these gains without sacrificing photon efficiency: it retains a one- to two-order-of-magnitude improvement over conventional methods while suppressing background artifacts at their source. This balances three competing demands in computational microscopy: noise suppression, structural authenticity, and reduced phototoxicity.

Looking ahead, the principle of risk-aware adaptive processing could extend to computational microscopy tasks beyond denoising, including image segmentation, organelle colocalization analysis, and quantitative assessment of subcellular interactions. In neuroscience, artifact-free, high-SNR imaging could support high-density synaptic tracking; in oncology, it could help detect small metastatic protrusions more reliably.

By eliminating misleading artifacts that have long complicated image interpretation, Adaptive-SN2N supports quantitative life science research grounded in data authenticity and reproducibility. As demands on imaging resolution and analytical complexity continue to rise, the framework is well placed to support new discoveries at the intersection of biology, optics, and machine intelligence.
