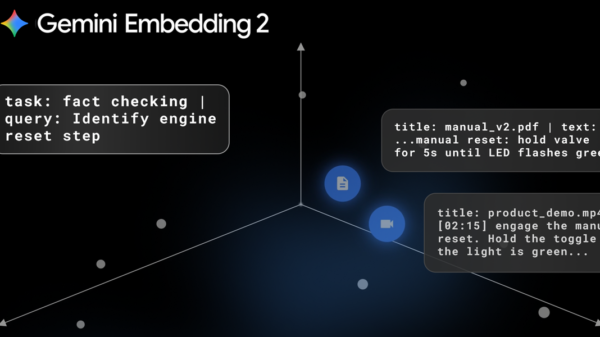

Artificial intelligence (AI) has moved from buzzword to crucial component of the fashion, apparel, and beauty (FAB) industries. As early as 2024, AI was already enhancing recommendation engines, search functionality, and personalization efforts. Since then, its applications have expanded significantly to include design tools, virtual try-on experiences, synthetic models, and even the digital “resurrection” of iconic fashion and beauty figures. These advances have drawn growing attention from lawmakers.

AI technology now enables brands to generate virtual fabrics and prints, swap outfits in lookbooks, and create campaigns featuring synthetic models. Virtual try-on tools provide consumers with the ability to experiment with different lipstick shades, find suitable foundation matches, and visualize entire outfits. Behind these consumer-facing innovations, AI relies on extensive datasets, including fashion photography, runway imagery, influencer content, and e-commerce catalogs. Additionally, these tools often incorporate complete digital captures of models, utilizing methods like 3D body scans, face mapping, voice cloning, and digital replicas.

For brands, the advantages of AI are compelling. It reduces costs, accelerates creative cycles, and facilitates rapid localization of assets, ultimately providing highly personalized shopping experiences. Generative systems can create new imagery in mere hours, while AI styling engines tailor outfits and beauty routines to fit individual consumer preferences. However, the technology’s dependence on real individuals’ images, bodies, voices, and performances raises ethical concerns regarding consent and ownership.

Without a comprehensive federal AI statute, FAB brands face a complex patchwork of long-standing legal doctrines and emerging AI-specific regulations. States such as California, New York, Tennessee, Florida, and Arkansas have right of publicity laws that protect individuals’ identities, in some cases even after death. Brands that create AI campaigns featuring “resurrected” icons without proper consent from the relevant estates may face legal challenges.

Legal Frameworks Evolving

New York has emerged as a pivotal state for legislation governing AI and models. The New York Fashion Workers Act, effective June 19, 2025, requires that a model’s consent to AI use, such as the creation of a digital replica, detail the scope, purpose, duration, and compensation. Routine retouching is excluded from this requirement. In addition, the New York Digital Replica Law, effective January 1, 2025, targets “rights-grab” contracts: it voids digital replica provisions that lack a reasonably specific description of the intended uses where the individual was not represented by legal counsel or a labor union. Together, these laws signal a shift in how brands must approach consent and usage rights.

New York’s AI Transparency in Advertising and Synthetic Performer Disclosure Law, effective June 9, 2026, requires that any commercial utilizing AI-generated synthetic performers must clearly disclose this fact, with penalties for non-compliance. Additionally, a new posthumous right of publicity law stipulates that consent from heirs or executors is necessary before using a deceased person’s likeness in campaigns, which may impact upcoming launches based on iconic figures.

Other states are following suit. Tennessee’s ELVIS Act and Arkansas’s HB 1071 specifically extend publicity rights to AI-generated likenesses and voices, prohibiting unauthorized commercial uses and imposing penalties. At the federal level, the proposed NO FAKES Act aims to establish a nationwide right against unauthorized digital replicas of individuals’ likenesses, voices, or performances. This proposal accompanies various legislative efforts targeting deepfakes and harmful AI content, emphasizing accountability for creators and platforms alike.

In addition to legislative developments, traditional advertising and consumer protection laws apply to AI-generated content, meaning misleading synthetic endorsements can trigger claims of false advertising. Privacy and biometric laws such as Illinois’s Biometric Information Privacy Act (BIPA) and California’s CCPA/CPRA further complicate the landscape, governing how brands collect and utilize biometric data, including face scans and voice recordings. Noncompliance can lead to significant damages and class-action lawsuits.

The legal framework continues to evolve. California’s AI Transparency Act requires covered generative AI providers to offer tools for detecting AI-generated or AI-modified media, and New York’s proposed synthetic content provenance bill would require AI systems to embed cryptographic provenance data. These measures point toward a future in which watermarking and verifiable origins for AI content become standard practice.

Given these complexities, brands must ensure that their contracts reflect how AI is actually used. Agreements should specify whether digital replicas or scans will be created, how those assets will be used, and whether talent imagery will serve as training material for AI systems. Compensation for AI-related use should be stated separately from traditional fees. Many FAB companies are also acting proactively by establishing cross-functional committees that bring together legal, marketing, HR, and IT to oversee AI deployment.

In an era where AI reshapes creative industries, brands must tread carefully to avoid overstepping boundaries regarding models’ likenesses. The risk of unauthorized or excessive use of a model’s image looms large, particularly as AI blurs the lines between existing images and new creations. Addressing these challenges at the contractual stage is essential for both brands and talent, ensuring that consent, compensation, and control remain central to the evolving synthetic marketplace.
