Employment law in the age of AI is rapidly evolving, presenting challenges that many companies struggle to navigate. As more states pass legislation governing the use of artificial intelligence, and as new case law continues to develop, chief compliance officers and in-house counsel are tasked with ensuring that their compliance policies adapt in line with these changes. Keeping pace with this evolving landscape is crucial for organizations seeking to avoid legal pitfalls related to employment discrimination.

Recent discussions among experts, including a webinar hosted by the law firm Manatt, have highlighted key developments in state AI laws. These laws aim to regulate the use of AI in hiring and employment processes, with a focus on transparency and fairness. States such as California and Illinois have already implemented measures requiring companies to disclose the use of AI in hiring decisions, creating a legal framework that emphasizes employee rights and equitable treatment.

In addition to the legislative framework, emerging case law is shaping how these regulations are interpreted and enforced. For instance, cases involving allegations of biased hiring practices linked to AI tools are beginning to surface, underscoring the need for companies to scrutinize their AI algorithms. Experts warn that organizations must be proactive in evaluating their AI systems to ensure they do not inadvertently perpetuate biases against certain demographic groups.

Moreover, the risks associated with AI are not just legal but also reputational. Companies that fail to address compliance issues may find themselves facing public backlash, which can have long-lasting effects on their brand. As public awareness of AI’s potential biases grows, stakeholders are increasingly demanding accountability from organizations utilizing these technologies. This calls for robust risk management strategies that encompass both legal compliance and ethical considerations.

Best practices for mitigating AI risks include conducting regular audits of AI systems, ensuring transparency in decision-making processes, and fostering an inclusive workplace culture. Organizations are also advised to provide comprehensive training programs for employees that explain how AI affects hiring and employment practices. By prioritizing education and awareness, companies can better navigate the complexities of AI governance.
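To make the idea of a regular audit more concrete, the sketch below shows one check an audit might include: comparing selection rates across demographic groups. It is an illustration only, not a method described by the experts cited here. The function names, the record format, and the sample data are all hypothetical, and the 0.8 threshold reflects the "four-fifths" rule of thumb from U.S. federal guidance on employee selection, which is a screening heuristic rather than a legal conclusion.

```python
from collections import Counter


def selection_rates(records):
    """Return the share of candidates selected in each group.

    `records` is an iterable of (group, was_selected) pairs.
    This record format is an assumption made for illustration.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    best = max(rates.values())
    if best == 0:
        # Nobody was selected, so no ratio can be computed.
        return {group: None for group in rates}
    return {group: rate / best for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose impact ratio falls below the threshold.

    The 0.8 default mirrors the four-fifths rule of thumb; a flag is
    a prompt for closer review, not a finding of discrimination.
    """
    return sorted(
        group
        for group, ratio in ratios.items()
        if ratio is not None and ratio < threshold
    )


# Hypothetical audit data: outcomes from an AI screening tool.
sample = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)
rates = selection_rates(sample)
ratios = impact_ratios(rates)
flagged = flag_groups(ratios)
```

In this made-up sample, group B is selected at a noticeably lower rate than group A, so it would be flagged for further review. A real audit would also need to account for sample size, statistical significance, and the specific requirements of each applicable state law.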

As the regulatory landscape continues to evolve, organizations should also anticipate future trends in AI legislation. Experts predict that we may see more comprehensive federal regulations that unify state laws, providing a clearer framework for compliance. This could lead to an increase in collaboration between companies, regulators, and advocacy groups to establish standards that promote fairness and accountability in AI applications.

In conclusion, as AI technologies become more ingrained in employment practices, the imperative for companies to adapt to changing legal requirements cannot be overstated. The intersection of technology and law will continue to present both challenges and opportunities. By staying informed and proactive, organizations can safeguard against compliance risks while fostering a fair and equitable workplace for all employees.

See also UK Government Delays AI Legislation Amid Growing Public Demand for Regulation

UK Government Delays AI Legislation Amid Growing Public Demand for Regulation Pennsylvania Senate Passes AI Chatbot Regulation Bill for Minors with $10K Penalties

Pennsylvania Senate Passes AI Chatbot Regulation Bill for Minors with $10K Penalties AI Governance Struggles as Companies Push for Rapid Adoption Amid Speed Imperative

AI Governance Struggles as Companies Push for Rapid Adoption Amid Speed Imperative JDIX Launches AI Systems to Enhance Clinical Trial Compliance and Data Integrity

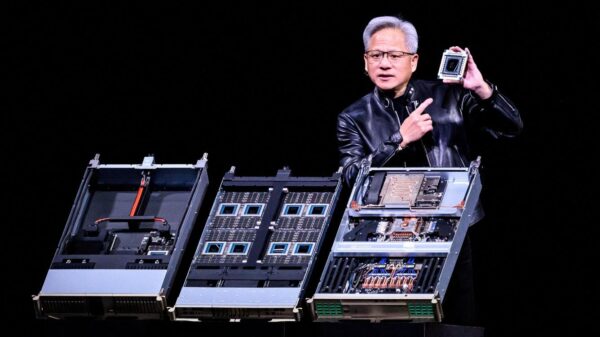

JDIX Launches AI Systems to Enhance Clinical Trial Compliance and Data Integrity Nvidia Secures US and China Approval to Boost AI Chip Sales, Targeting $10B Market

Nvidia Secures US and China Approval to Boost AI Chip Sales, Targeting $10B Market