In a recent commentary, a former Facebook executive reflected on the social media giant’s journey from its inception under Mark Zuckerberg to its current state, where user engagement often trumps ethical considerations. This shift, he argues, mirrors the race among tech companies in the artificial intelligence sector, where the logic of “if we don’t do it, someone else will” dominates decision-making.

The potential of AI to create unprecedented abundance stands in stark contrast to the risks of an unregulated landscape, suggesting a pivotal moment in shaping our collective future. A White House framework introduced last week proposed a familiar approach: shielding AI companies from liability and allowing them to self-regulate. However, the commentary stresses that true public interest demands a shift in governance, placing the public in charge of AI development.

AI technology is already affecting daily life, influencing job opportunities, loan approvals, and even the selection of military targets, often without public input or oversight. As tech leaders like Sam Altman, Dario Amodei, Demis Hassabis, Elon Musk, and Zuckerberg compete in this environment, concerns about safety and ethics grow. Those concerns underscore the need for regulatory change to ensure AI serves societal interests rather than purely commercial ones.

Public sentiment reflects a desire for involvement in AI governance. Polling by Blue Rose Research reveals that 66% of Americans support citizen panels to help set AI regulations, a sentiment that transcends political affiliations. Furthermore, 79% of respondents expressed worry about the lack of governmental planning for potential job losses driven by AI advancements, indicating a populace that is not apathetic but rather feels excluded from the conversation.

Democratizing AI Oversight

Advocates for public control argue that “the public in charge” should not simply mean elections influenced by money and lobbyists. Instead, they propose establishing citizens’ assemblies: representative groups of everyday individuals who engage in informed deliberation and are granted real authority to set goals and constraints for AI development. Citizens in these assemblies would not write the code themselves, but they would have significant input on the technology’s purposes and ethical boundaries.

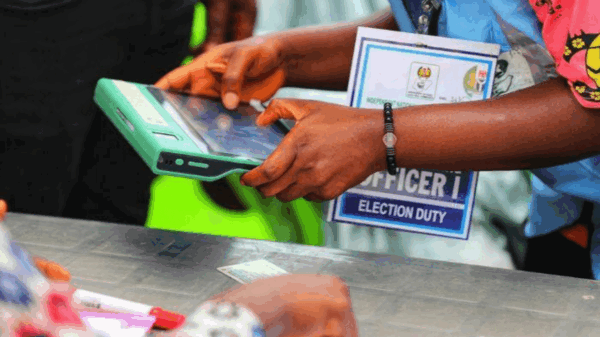

Historical precedents for this model exist, with successful citizens’ assemblies helping to resolve contentious issues in countries like Ireland. In the AI context, assemblies have already begun to shape policy in places such as Taiwan, the UK, and Belgium, addressing issues ranging from facial recognition to job displacement. Unlike elected officials, ordinary citizens in these assemblies have no financial donors to please or reelection campaigns to consider, allowing them to focus solely on public welfare.

When left to market forces, AI tends to prioritize engagement and profitability, often at the expense of ethical considerations. Democratic governance, conversely, could steer AI toward enhancing public health, worker empowerment, and societal well-being. Such governance would require a robust framework that ensures AI resources are allocated equitably, addressing critical areas such as childcare, elder care, and retraining programs for displaced workers.

As discussions around public oversight of technology gain traction, infrastructure is already being developed to facilitate this model of governance. Initiatives like One Project, a non-profit organization, are working toward creating participatory platforms that enable democratic engagement on a large scale. These efforts echo the public trust principles that govern shared resources like airwaves and waterways, advocating for AI to be treated as a public asset rather than a mere profit-driven commodity.

With AI set to generate trillions of dollars in new wealth, harnessing its potential for the greater good requires public control, so that society as a whole benefits rather than just shareholders. The need for democratic oversight is made more pressing by ongoing debates in Washington, where a common narrative holds that political divisiveness and technical complexity hinder meaningful public involvement in AI governance. Nonetheless, cross-partisan support exists, and the question remains whether society will assert its demand for democratic input before AI becomes entrenched in the same profit-centric path as social media.

If AI is to reshape lives globally, the public must have a decisive role in determining its trajectory. This notion, far from radical, embodies the essence of self-governance. As the window for intervention narrows, the call for democratic oversight of AI grows more urgent.
