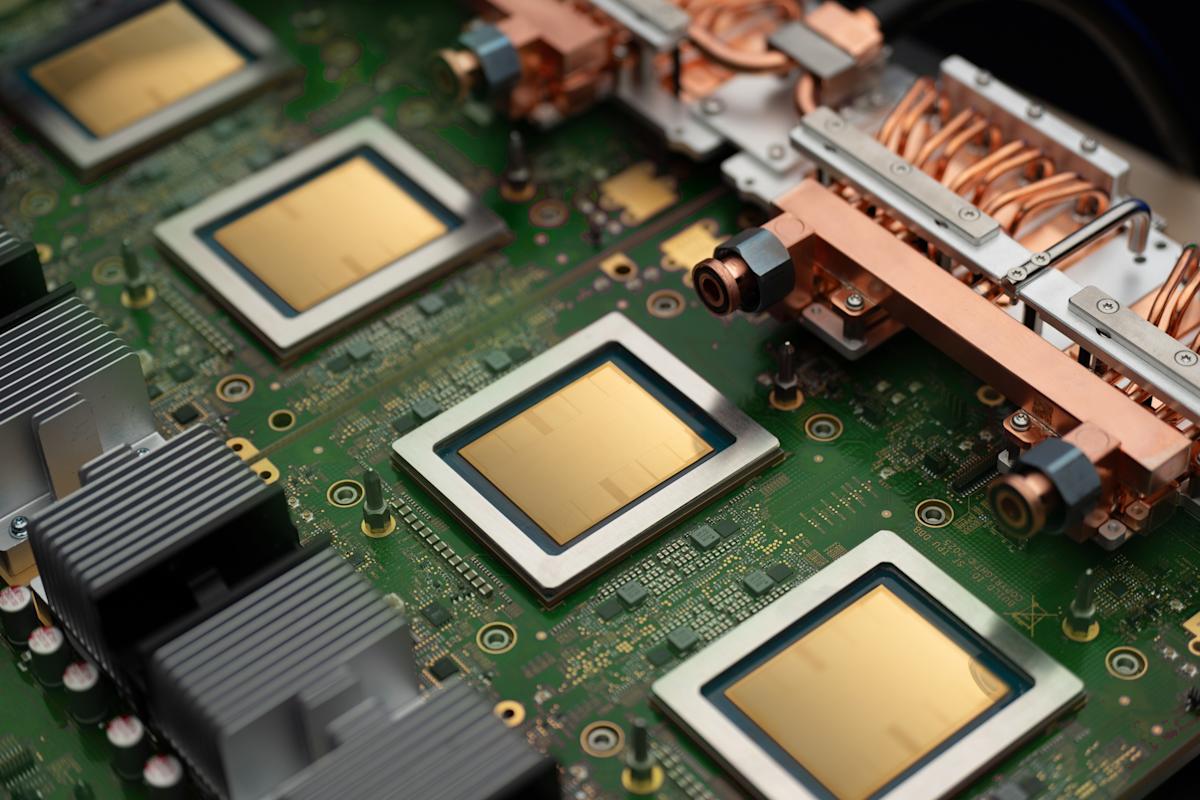

Google (GOOG, GOOGL) unveiled two new artificial intelligence processors, the TPU 8t and TPU 8i, at its Google Cloud Next 2026 conference in Las Vegas on Wednesday. The launch is part of Google’s ongoing effort to compete with industry heavyweights such as Nvidia (NVDA) and AMD (AMD) in the rapidly evolving AI market. Earlier this month, the company announced an expanded partnership with Anthropic (ANTH.PVT), pledging to provide “multiple gigawatts of next-generation TPU capacity” to the AI lab.

In an apparent bid to cement its position in the sector, Google is also supplying TPU capacity to rival OpenAI (OPAI.PVT) to support that organization’s AI products. Reports from February indicated that Meta (META) has entered into a multiyear, multibillion-dollar agreement for access to Google’s TPUs, a sign that major tech firms increasingly rely on Google’s silicon.

According to Google, the TPU 8t is optimized for training AI models and can shorten the frontier model development cycle from months to weeks. The company says the chip delivers a 2.8x improvement in price-performance over its predecessor, an essential consideration for customers that want powerful chips without exorbitant operating costs.
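To put the 2.8x figure in cost terms, here is a rough sketch. It assumes the improvement means 2.8 times as much work per dollar and that the workload stays fixed; Google's exact definition of the metric is not given here, so the variable names and the interpretation are illustrative only.

```python
# Assumption: "2.8x price-performance" means 2.8x the work done per dollar.
price_performance_gain = 2.8

# For a fixed workload, the cost relative to the previous generation
# is the inverse of the gain.
relative_cost = 1 / price_performance_gain
implied_savings = 1 - relative_cost

print(f"Relative cost for the same workload: {relative_cost:.1%}")
print(f"Implied savings: {implied_savings:.1%}")
```

Under that reading, the same training job would cost a little over a third of what it did on the prior chip. Actual savings would depend on pricing and utilization.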

Meanwhile, the TPU 8i is designed primarily for inference, that is, running trained AI models, and for managing AI agents. Both chips are set to be available later this year, further solidifying Google’s foothold in the competitive AI landscape.

Hyperscalers such as Google, Amazon (AMZN), and Microsoft (MSFT) are expanding their in-house AI chip efforts, a notable shift in the industry. Meta is also developing its own AI chips, with its Meta Training and Inference Accelerator (MTIA) series aiming to rival Nvidia’s top offerings. Amazon recently announced an expanded chip deal with Anthropic, which involves more than $100 billion in spending on AWS technologies over the next decade.

This trend of major companies developing their own chips poses a potential challenge for Nvidia and AMD. In its latest quarter, Nvidia reported that hyperscalers represented slightly over 50% of its total data center revenue. Given that Nvidia’s data center segment is its most lucrative business, accounting for $193.7 billion out of $215.9 billion in total sales in fiscal 2026, these developments could significantly impact its revenue streams.
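A quick calculation shows how concentrated that revenue is. The sales figures below are the ones quoted above. The hyperscaler estimate is an assumption for illustration only: it applies the roughly 50% share reported for the latest quarter to the full fiscal year, which Nvidia has not stated.

```python
# Figures quoted in the article, in billions of US dollars (fiscal 2026).
data_center_sales = 193.7
total_sales = 215.9

# Share of total sales that comes from the data center segment.
data_center_share = data_center_sales / total_sales

# Assumption (not from Nvidia): the ~50% hyperscaler share reported for the
# latest quarter is applied to the whole fiscal year.
assumed_hyperscaler_share = 0.50
hyperscaler_sales = data_center_sales * assumed_hyperscaler_share
hyperscaler_share_of_total = hyperscaler_sales / total_sales

print(f"Data center share of total sales: {data_center_share:.1%}")
print(f"Illustrative hyperscaler sales: ${hyperscaler_sales:.1f}B")
print(f"Illustrative hyperscaler share of total: {hyperscaler_share_of_total:.1%}")
```

On these numbers the data center segment is close to 90% of total sales, and under the stated assumption hyperscalers alone would account for somewhat under half of all revenue.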

Despite these challenges, Nvidia has consistently pushed back against claims that its customers’ self-developed chips could pose a strategic threat. The company maintains that its processors are versatile and reprogrammable for a variety of workloads, allowing them to remain competitive even as customers explore alternatives.

The introduction of Google’s TPU 8t and TPU 8i highlights the escalating competition among tech giants. With partnerships and proprietary chip development becoming increasingly common, the next phase of AI will likely be marked by companies using their own hardware to improve performance and reduce costs, a trend that may alter the competitive balance among key players.
